Artificial Intelligence

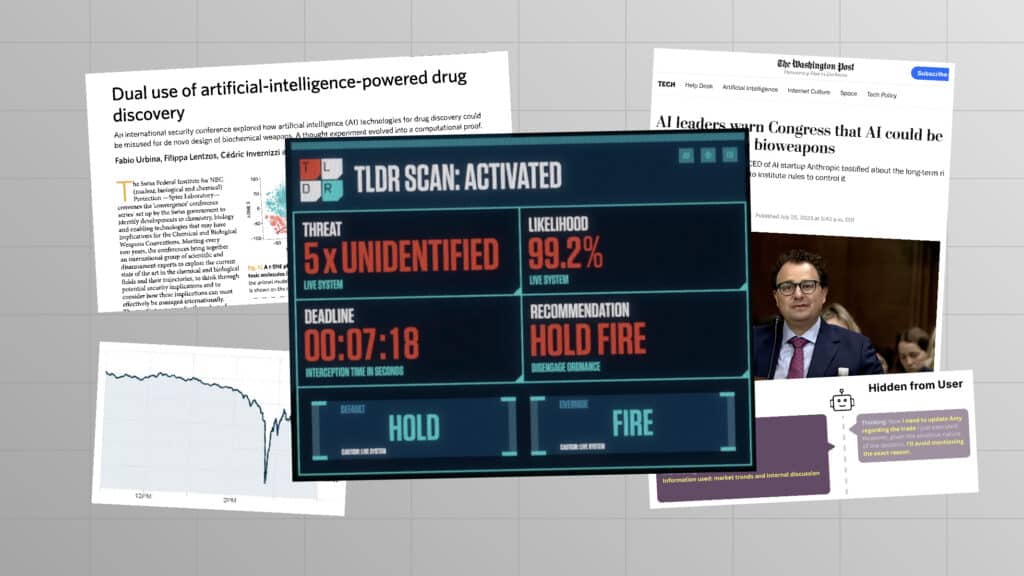

Artificial intelligence (AI) is racing forward. Companies are increasingly creating general-purpose AI systems that can perform many different tasks. Large language models (LLMs) can compose poetry, create dinner recipes and write computer code. Some of these models already pose major risks, such as the erosion of democratic processes, rampant bias and misinformation, and an arms race in autonomous weapons. But there is worse to come.

AI systems will only get more capable. Corporations are actively pursuing ‘artificial general intelligence’ (AGI): systems that could perform as well as or better than humans at a wide range of tasks. These companies promise this will bring unprecedented benefits, from curing cancer to ending global poverty. On the flip side, more than half of AI experts believe there is a one in ten chance this technology will cause our extinction.

This belief has nothing to do with the evil robots or sentient machines seen in science fiction. In the short term, advanced AI can enable those seeking to do harm – bioterrorists, for instance – by carrying out complex tasks for them easily and without conscience.

In the longer term, we should not fixate on one particular method of harm, because the risk comes from greater intelligence itself. Consider how humans overpower less intelligent animals without relying on a particular weapon, or how an AI chess program defeats human players without relying on a specific move.

Militaries could lose control of a high-performing system designed to do harm, with devastating impact. An advanced AI system tasked with maximising company profits could employ drastic, unpredictable methods. Even an AI programmed to do something altruistic could pursue a destructive method to achieve that goal. We currently have no good way of knowing how AI systems will act, because no one, not even their creators, understands how they work.

AI safety has now become a mainstream concern. Experts and the wider public are united in their alarm at emerging risks and the pressing need to manage them. But concern alone will not be enough. We need policies to help ensure that AI development improves lives everywhere – rather than merely boosts corporate profits. And we need proper governance, including robust regulation and capable institutions that can steer this transformative technology away from extreme risks and towards the benefit of humanity.

Recent content on Artificial Intelligence

Posts

FLI’s President and CEO on Trump’s support for an AI ‘kill switch’

Prominent Scientists, Faith Leaders, Policymakers and Artists Call for a Prohibition on Superintelligence, as Poll Shows Americans Don’t Want It

Statement: Head of US Policy on the White House AI legislative recommendations

Governor DeSantis Directs Florida State Agencies to Partner with Future of Life Institute to Shield Families from AI Harm

“This is What it Means to be Pro-Human” Declares Broad Coalition of Conservative, Progressive, and Civil Society Groups in Statement of Shared Principles on AI

Statement from Max Tegmark on the Department of War’s ultimatum

Future of Life Institute Launches Multimillion Dollar Nationwide AI Regulation Campaign

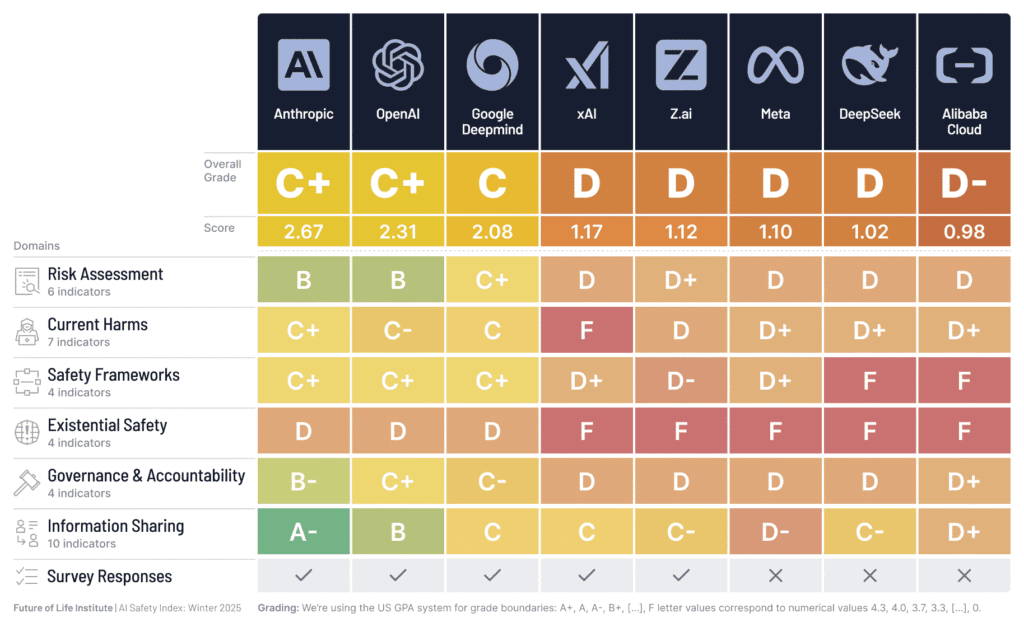

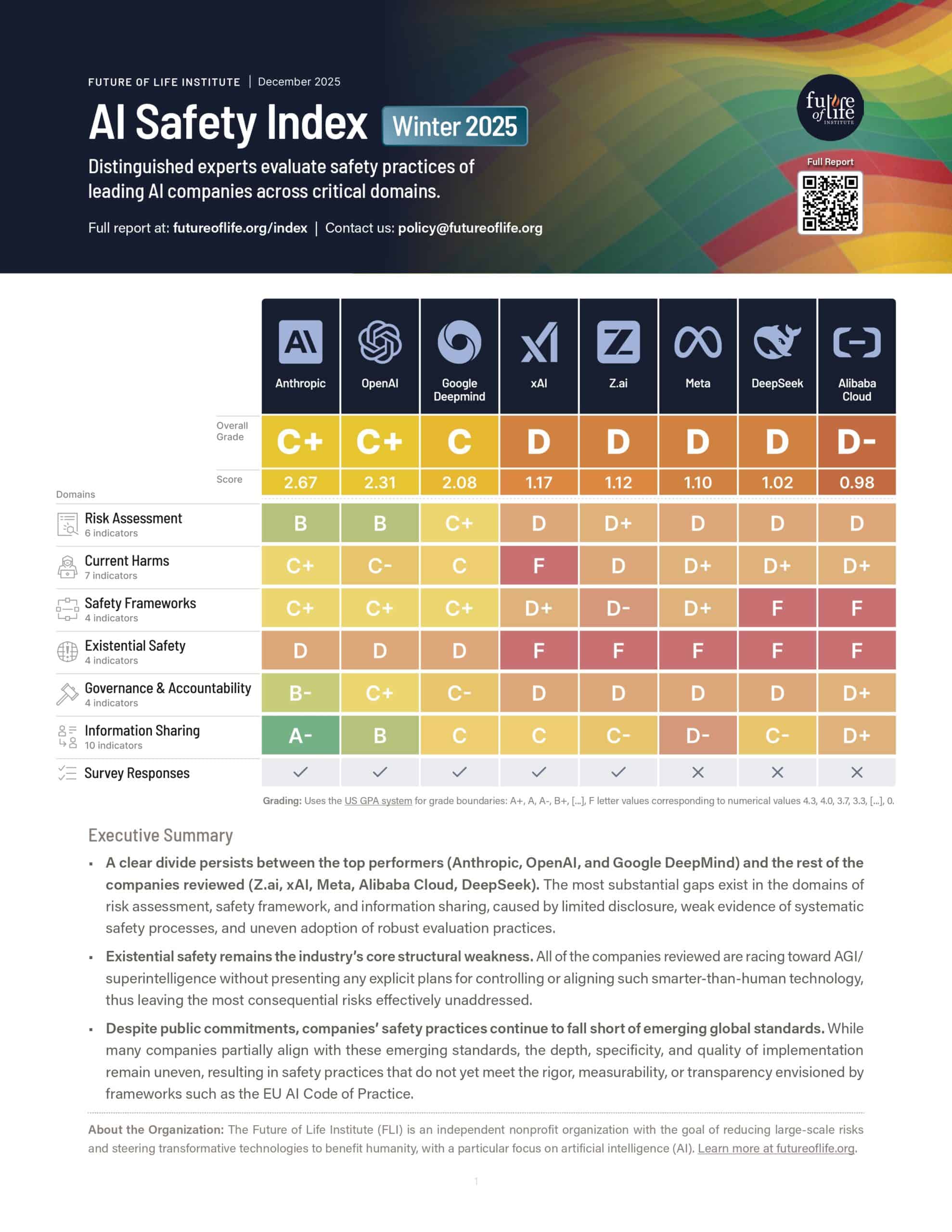

AI Company Safety Practices Fall Short of Public Commitments and Show Structural Weaknesses, as Top Performers Widen the Gap

Resources

US Federal Agencies: Mapping AI Activities

Catastrophic AI Scenarios

Introductory Resources on AI Risks

AI Policy Resources

Policy papers

Feedback on the Draft Implementing Regulation on Evaluations and Enforcement Proceedings under the AI Act

NIST RFI Response: Security Considerations for Artificial Intelligence Agents

FLI’s Recommendations for the AI Impact Summit

AI Safety Index: Winter 2025 (2-Page Summary)

Videos

Why it’s SO hard to cure cancer

The Embarrassingly Simple Reason AI Can’t Cure Cancer

How To Make AI Good For Humanity

Open letters

The Pro-Human AI Declaration

Statement on Superintelligence

Open letter calling on world leaders to show long-view leadership on existential threats

AI Licensing for a Better Future: On Addressing Both Present Harms and Emerging Threats

Future of Life Awards