FAQs about FLI’s Open Letter Calling for a Pause on Giant AI Experiments

Disclaimer: Please note that these FAQs have been prepared by FLI and do not necessarily reflect the views of the signatories to the letter. This is a live document that will continue to be updated in response to questions and discussion in the media and elsewhere.

These FAQs are in reference to: ‘Pause Giant AI Experiments: An Open Letter’.

What does your letter call for?

We’re calling for a pause on the training of models larger than GPT-4 for 6 months.

This does not imply a pause or ban on all AI research and development, or on the use of systems that have already been placed on the market.

Our call is specific and addresses a very small pool of actors who possess this capability.

What has the reaction to the letter been?

The reaction has been intense. We feel that it has given voice to a huge undercurrent of concern about the risks of high-powered AI systems, not just among the public but also among top researchers in AI and other fields, business leaders, and policymakers. As of writing, the letter has been signed by over 1800 CEOs and over 1500 professors.

The letter has also generated intense media coverage and added to momentum for action that goes far beyond FLI’s efforts. In the same week as the letter, the Center for Artificial Intelligence and Digital Policy asked the FTC to stop new GPT releases, and UNESCO called on world governments to enact a global ethical AI framework.

Who wrote the letter?

FLI staff, in consultation with AI experts such as Yoshua Bengio and Stuart Russell, and heads of several AI-focused NGOs, composed the letter. The other signatories did not participate in its development and only found out about the letter when asked to sign.

Doesn’t your letter just fuel AI hype?

Current AI systems are becoming quite general and already human-competitive at a very wide variety of tasks. This is itself an extremely important development.

Many of the world’s leading AI scientists also think that more powerful systems – often called “Artificial General Intelligence” (AGI) – that are competitive with humanity’s best at almost any task are achievable, and building them is the stated goal of many commercial AI labs.

From our perspective, there is no natural law or barrier to technical progress that prohibits this. We therefore operate under the assumption that AGI is possible, and possible sooner than many expected.

Perhaps we, and many others, are wrong about this. But if we are not, the implications for our society are profound.

What if I still think AGI is hype?

That’s OK – this is a polarizing issue even for scientists who work on AI every day.

Regardless of what we call it, the fact is that AI systems are growing ever more powerful – and we don’t know their upper bound. Malicious actors can use these systems to do bad things, and regular consumers might be misled, or worse, by their output.

In 2022, researchers showed that a model meant to generate therapeutic drugs could be used to generate novel biochemical weapons instead. OpenAI’s system card documented how GPT-4 could be prompted into tricking a TaskRabbit worker into completing a CAPTCHA verification. More recently, a tragic news report covered how an individual committed suicide after a “conversation” with a GPT clone.

In public comments, leaders of labs like OpenAI, Anthropic, and DeepMind have all acknowledged these risks and called for regulation.

We also believe they themselves would like a pause, but might feel competitive pressure to continue training and deploying larger models. If nothing else, a public commitment to a pause by all labs will alleviate that pressure and encourage transparency and cooperation.

Does this mean that you aren’t concerned about present harms?

Absolutely not. The use of AI systems – of any capacity – creates harms such as discrimination and bias, misinformation, the concentration of economic power, adverse impact on labor, weaponization, and environmental degradation.

We acknowledge and reaffirm that these harms are also deeply concerning and merit work to solve. We are grateful for the work of many scholars, business leaders, regulators, and diplomats continually working to surface them at the national and international level. FLI has supported governance initiatives that address the harms mentioned above, including the NIST AI RMF, the FTC’s action on misleading AI claims, the EU AI Act and Liability Directive, and arms control for autonomous weapons at the UN and other fora.

It is not possible to enjoy positive futures with AI if products are not safe or harm human rights and international stability.

These are not mutually exclusive challenges. For FLI, it is important that all concerned stakeholders be able to contribute to the effort of making AI and AGI beneficial for humanity.

What do you expect in 6 months? After all, we don’t even know what “more powerful than GPT-4” means.

Most researchers agree that, currently, the total amount of computation that goes into a training run is a good proxy for how powerful the resulting model is. So a pause could, for example, apply to training runs exceeding some compute threshold. Our letter, among other things, calls for the oversight and tracking of highly capable AI systems and large pools of computational capability.
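As a rough illustration of how a compute threshold could work, the sketch below estimates the compute of a training run and compares it to a cutoff. It assumes the commonly used rule of thumb that training a dense model takes about 6 floating-point operations per parameter per training token. The threshold value, the function names, and the example run are hypothetical placeholders chosen for illustration; none of them come from the letter.

```python
# Rough training-compute estimate using the common rule of thumb:
#   FLOPs ~= 6 * (parameter count) * (training tokens)
# All numbers below are hypothetical placeholders, not figures from the letter.

HYPOTHETICAL_THRESHOLD_FLOPS = 1e25  # illustrative cutoff, chosen arbitrarily


def estimate_training_flops(num_parameters: float, num_tokens: float) -> float:
    """Approximate total training compute for a dense model (assumed rule of thumb)."""
    return 6.0 * num_parameters * num_tokens


def exceeds_threshold(num_parameters: float, num_tokens: float,
                      threshold: float = HYPOTHETICAL_THRESHOLD_FLOPS) -> bool:
    """Return True if the estimated run is above the compute threshold."""
    return estimate_training_flops(num_parameters, num_tokens) > threshold


if __name__ == "__main__":
    # A made-up run: 70 billion parameters trained on 1.4 trillion tokens.
    flops = estimate_training_flops(70e9, 1.4e12)
    print(f"Estimated compute: {flops:.2e} FLOPs")
    print("Above hypothetical threshold:", exceeds_threshold(70e9, 1.4e12))
```

A rule like this is attractive because compute can be estimated before a run starts, though any real threshold would have to be set and audited by the parties overseeing the pause.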

The central hope is that the AI companies currently racing each other will take a pause to instead coordinate on how they can proceed more safely. We have also listed 6 other governance responses in our open letter.

We are aware that these responses involve coordination across multiple stakeholders and that more needs to be done. This will undoubtedly take longer than 6 months.

But our letter and proposed pause can help channel the political will to address them. We’ve already seen this happening – UNESCO has leveraged our letter to urge member states to make faster progress on its global AI ethics principles.

Won’t a pause slow down progress and allow other countries to catch up?

This pause only concerns frontier runs by the largest of AI development efforts. Amongst these, nobody “wins” by launching – or even developing – super-powerful, unpredictable, and potentially uncontrollable AI systems. A six-month pause is not long enough for others to “catch up.”

Have we really done something like this before?

Look no further than the 1975 Asilomar Conference on Recombinant DNA. Due to potential safety hazards in the 60s and 70s, scientists had halted experiments using recombinant DNA technology.

The conference allowed leading scientists and government experts to prohibit certain experiments and to design rules that would allow actors to safely research this technology, leading to huge progress in biotechnology.

Sometimes stepping back to reassess and reevaluate can engineer trust between scientists and the societies they operate in and subsequently accelerate progress.

What’s going on with your signatories? Some have hedged their responses, and others claim they never signed.

Not all our signatories agree with us a hundred percent – that’s OK. AI progress is rapid and is forcing our societies to adapt with incredible speed. We don’t think full consensus on all the difficult issues is possible. We are glad to see that, despite some disagreement, many of the world’s leading AI scientists have signed this letter.

We also confronted some verification challenges because our embargoed letter was shared on social media earlier than it should have been. Some individuals were incorrectly and maliciously added to the list before we were prepared to publish widely. We have now improved our process, and all signatories that appear at the top of the list are genuine.

About the Future of Life Institute

The Future of Life Institute (FLI) is the world’s oldest and largest AI think tank, with a team of 35+ full-time staff operating across the US and Europe. FLI has been working to steer the development of transformative technologies towards benefitting life and away from extreme large-scale risks since its founding in 2014. Find out more about our mission or explore our work.

Related content

Other posts about AI

Governor DeSantis Directs Florida State Agencies to Partner with Future of Life Institute to Shield Families from AI Harm

Statement from Max Tegmark on the Department of War’s ultimatum

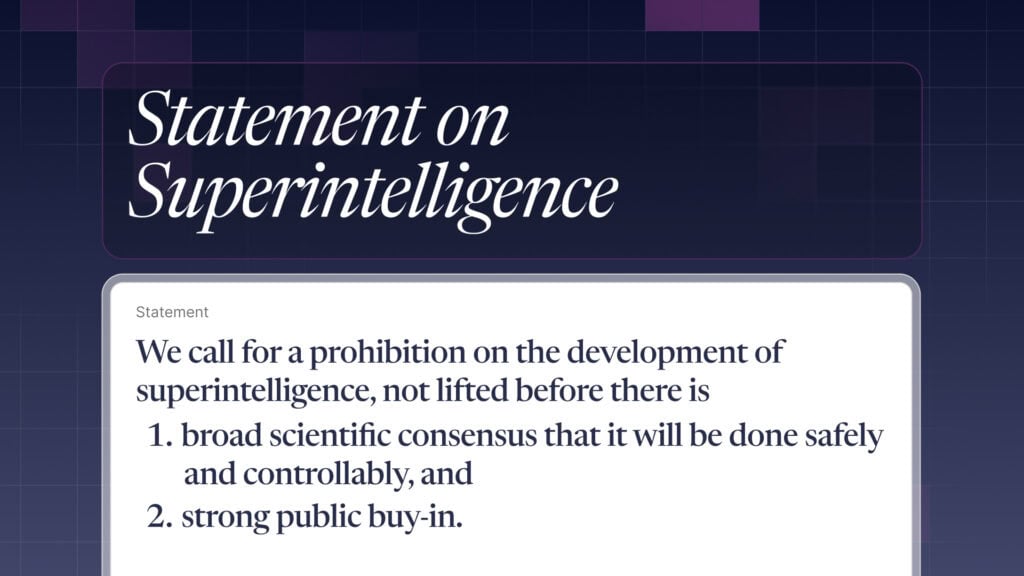

The U.S. Public Wants Regulation (or Prohibition) of Expert‑Level and Superhuman AI

Some of our Policy & Research projects

Control Inversion