Latest Episodes

Great podcast on initiatives that are critical for our future.

Lucas/FLI do an excellent job of conducting in-depth interviews with incredible people whose work stands to radically impact humanity’s future. It’s a badly missing and needed resource in today’s world, is always high-quality, and I'm able to learn something new/unique/valuable each time. Great job to Lucas and team!

Amazing Podcast !

People need to know about this excellent podcast (and the Future of Life Institute) focusing on the most important issues facing the world. The topics are big, current, and supremely important; the guests are luminaries in their fields; and the Interviewer, Lucas Perry, brings it all forth in such a compelling way [...]

Great show!

Lucas, host of the Future of Life podcast, highlights all aspects of tech and more in this can’t miss podcast! The host and expert guests offer insightful advice and information that is helpful to anyone that listens!

Science-smart interviewer asks very good questions!

Great, in depth interviews.

Amazing listening...

What a wonderfully informative podcast. The most important issues for life's future are addressed here with great lucidity.

Sequences

Limited-series podcasts on pressing issues

Throughout the running history of our podcast we have produced a couple of special 'sequences', series of episodes focused on tackling a pressing issue in more detail. Below you can find the sequences we have produced so far:

29 episodes

AI Alignment Podcast

This podcast series covers and explores the AI alignment problem across a large variety of domains, reflecting the fundamentally interdisciplinary nature of AI alignment.

Running from 2018-2020.

27 episodes

Not Cool

Hear directly from scientists and experts on the causes and impacts of climate change and the action that's needed going forward.

Running from September - November 2019.

8 episodes

Imagine A World

Can you imagine a world in 2045 where we manage to avoid the climate crisis, major wars, and the potential harms of artificial intelligence?

Running from September - October 2023.

Episode archive

All of our stories and interviews

Filter by search

Sort order

Sequences

Category

Number of results

23 May, 2025

Facing Superintelligence (with Ben Goertzel)

Play

13 March, 2025

Keep the Future Human (with Anthony Aguirre)

Play

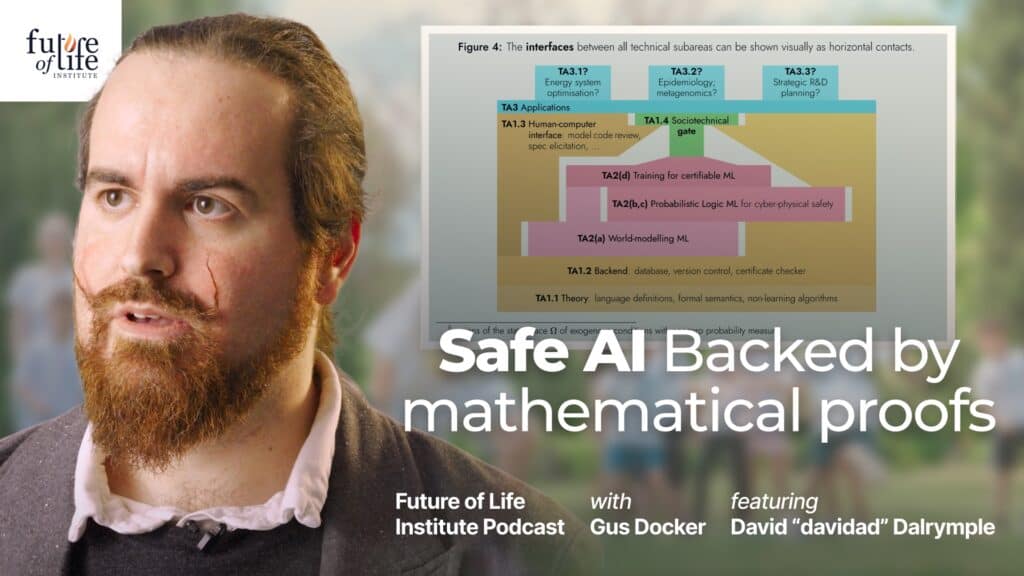

9 January, 2025

David Dalrymple on Safeguarded, Transformative AI

Play

Load more

Our work

Other projects in this area

We work on a range of projects across a few key areas. See some of our other projects in this area of work:

Multistakeholder Engagement for Safe and Prosperous AI

FLI is launching new grants to educate and engage stakeholder groups, as well as the general public, in the movement for safe, secure and beneficial AI.

Digital Media Accelerator

The Digital Media Accelerator supports digital content from creators raising awareness and understanding about ongoing AI developments and issues.

Our work