Gradual AI Disempowerment

This is only one of several ways that AI could go wrong. See our overview of Catastrophic AI Scenarios for more. Also see our Introductory Resources on AI Risks.

You have probably heard lots of concerning things about AI. One trope is that AI will turn us all into paperclips. Top AI scientists and CEOs of the leading AI companies signed a statement warning about the “risk of extinction from AI”. Wait – do they really think AI will turn us into paperclips? No, no one thinks that. Will we be hunted down by robots that look suspiciously like Arnold Schwarzenegger? Again, probably not. But the risk of extinction is real. One potential path is gradual, with no single dramatic moment.

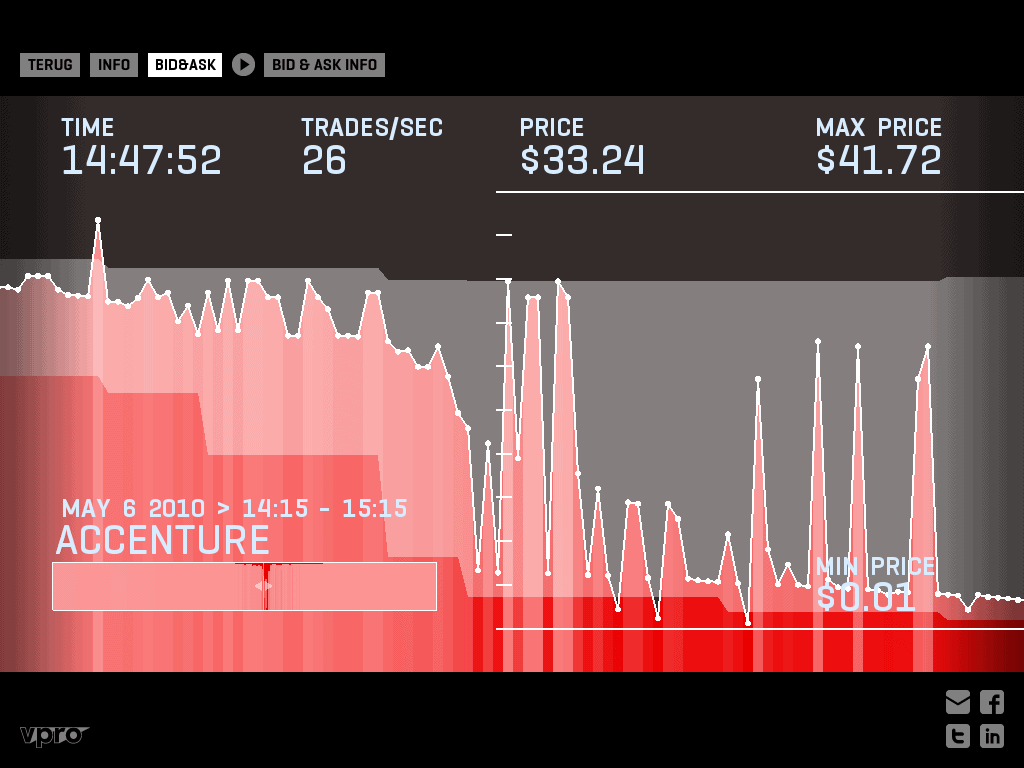

We have already experienced the risks of handing control to algorithms. Remember the 2010 flash crash? Automated trading algorithms temporarily wiped roughly a trillion dollars off the stock market within minutes. No one on Wall Street wanted to tank the market. The algorithms simply moved too fast for human oversight.

Now take the recent advances in AI, and extrapolate into the future. We have already seen a company appoint an AI as its CEO. If AI keeps up its recent pace of advancement, this kind of thing will become much more common. Companies will be forced to adopt AI managers, or risk losing out to those who do.

It’s not just the corporate world. AI will creep into our political machinery. Today, this involves AI-based voter targeting. Future AIs will be integrated into strategic decisions like crafting policy platforms and swaying candidate selection. Competitive pressure will leave politicians with no choice: Parties that effectively leverage AI will win elections. Laggards will lose.

None of this requires AI to have feelings or consciousness. Simply giving AI an open-ended goal like “increase sales” is enough to set us on this path. Maximizing an open-ended goal will implicitly push the AI to seek power because more power makes achieving goals easier. Experiments have shown AIs learn to grab resources in a simulated world, even when this was not in their initial programming. More powerful AIs unleashed on the real world will similarly grab resources and power.

History shows social takeovers can be gradual. Hitler did not become a dictator overnight. Nor did Putin. Both initially gained power through democratic processes. They consolidated control by incrementally removing checks and balances and quashing independent institutions. Nothing is stopping AI from taking a similar path.

You may wonder if this requires super-intelligent AI beyond comprehension. Not necessarily. AI already has key advantages: it can be copied many times over, run around the clock, absorb far more text than any person could read in a lifetime, and make decisions faster than any human. AI could be a superior CEO or politician without being strictly “smarter” than humans.

We can’t count on simply “hitting the off switch.” A marginally more advanced AI will have many ways to exert power in the physical world. It can recruit human allies. It can negotiate with humans, using the threat of cyberattacks or bio-terror. AI can already design novel bio-weapons and create malware.

Will AI develop a vendetta against humanity? Probably not. But consider the tragic tale of the Tecopa pupfish. It wasn’t overfished – humans merely thought their hot spring habitat was ideal for a resort. Extinction was incidental. Humanity has a key advantage over the pupfish: We can decide if and how to develop more powerful AI. Given the stakes, it is critical we prove more powerful AI will be safe and beneficial before we create it.

About the Future of Life Institute

The Future of Life Institute (FLI) is the world’s oldest and largest AI think tank, with a team of 35+ full-time staff operating across the US and Europe. FLI has been working to steer the development of transformative technologies towards benefitting life and away from extreme large-scale risks since its founding in 2014.
