FLI Highlights

2018

2nd AI Grants Competition

FLI launched our second AI safety grants competition at the start of 2018. The focus of this request for proposals (RFP) is on technical research or other projects enabling development of AI that is beneficial to society and robust in the sense that the benefits have some guarantees: our AI systems must do what we want them to do.

For many years, artificial intelligence (AI) research has been appropriately focused on the challenge of making AI effective, with significant recent success, and great future promise. This recent success has raised an important question: how can we ensure that the growing power of AI is matched by the growing wisdom with which we manage it?

2017

Ethics of Value Alignment Workshop

Lucas Perry, Meia Chita-Tegmark & Max Tegmark organized a one-day workshop with the Berggruen Institute & CIFAR on the Ethics of Value Alignment right after NIPS, where AI researchers, philosophers and other thought leaders brainstormed about promising research directions. For example, if the technical value-alignment problem can be solved, then what values should AI be aligned with and through what process should these values be selected?

Launch of autonomousweapons.org

To build on the excitement surrounding the Slaughterbots video and the UN CCW’s meeting on autonomous weapons, FLI created a new website to help concerned citizens learn more about threats from autonomous weapons and how they can help. Within the first few weeks, hundreds of thousands of people visited the site.

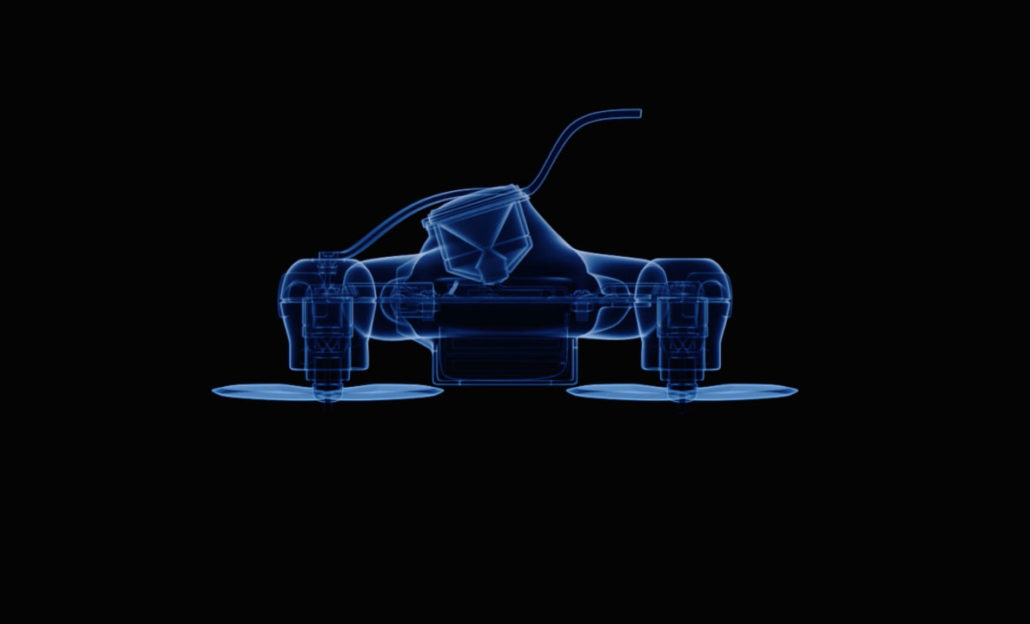

Slaughterbots

In November, ahead of the UN’s meeting on lethal autonomous weapons, or “killer robots”, AI researcher and FLI advisor Stuart Russell released a short film detailing the dystopian nightmare that could result from palm-sized killer drones. It may seem like science fiction, but many of these technologies already exist today. FLI worked with Russell to create the film, which quickly went viral and has received over 60 million views across different platforms.

Open Letter to the United Nations Convention on Certain Conventional Weapons

In order to build momentum for the UN’s meeting to discuss the legal status of autonomous weapons, FLI supported Toby Walsh’s open letter to companies involved in artificial intelligence and robotics, urging them to support the negotiations to ban autonomous weapons.

The letter reads: “Lethal autonomous weapons threaten to become the third revolution in warfare. Once developed, they will permit armed conflict to be fought at a scale greater than ever, and at timescales faster than humans can comprehend. These can be weapons of terror, weapons that despots and terrorists use against innocent populations, and weapons hacked to behave in undesirable ways. We do not have long to act. Once this Pandora’s box is opened, it will be hard to close. We therefore implore the High Contracting Parties to find a way to protect us all from these dangers.”

Inaugural Future of Life Award: Vasili Arkhipov

On October 27, 1962, a soft-spoken naval officer named Vasili Arkhipov single-handedly prevented nuclear war during the height of the Cuban Missile Crisis. Arkhipov’s submarine captain, thinking their sub was under attack by American forces, wanted to launch a nuclear weapon at the ships above. Arkhipov, with the power of veto, said no, thus averting nuclear war.

55 years after his courageous actions, the Future of Life Institute has presented the Arkhipov family with the inaugural Future of Life Award and $50,000 to honor humanity’s late hero. Arkhipov’s surviving family members, represented by his daughter Elena and grandson Sergei, flew into London for the ceremony, which was held at the Institution of Engineering and Technology.

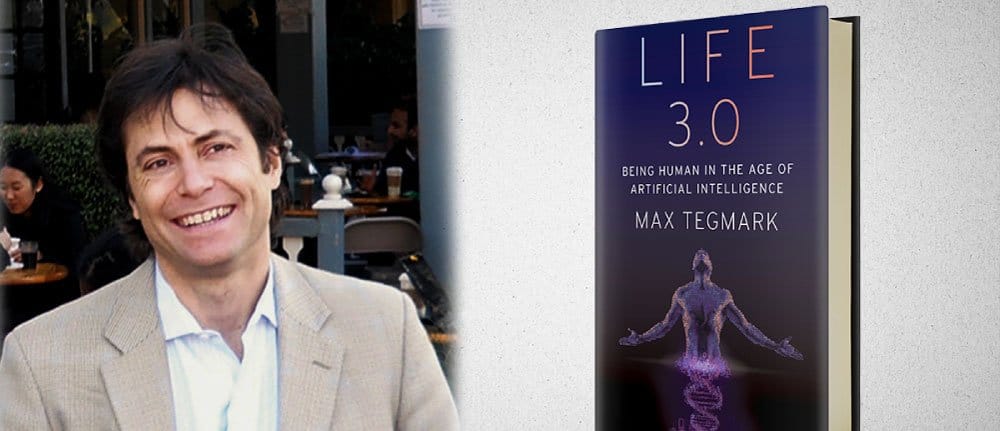

Max Tegmark Launches Life 3.0: Being Human in the Age of Artificial Intelligence

What will happen when machines surpass humans at every task? Max Tegmark’s New York Times bestseller, Life 3.0: Being Human in the Age of Artificial Intelligence, explores how AI will impact life as it grows increasingly advanced and more difficult to control.

AI will impact everyone, and Life 3.0 aims to expand the conversation around AI to include all people so that we can create a truly beneficial future. This page features the answers from the people who have taken the survey that goes along with Max’s book. To join the conversation yourself, please take the survey at ageofai.org.

United Nations Passes Treaty Banning Nuclear Weapons

Throughout 2017, FLI worked with the International Campaign to Abolish Nuclear Weapons (ICAN), PAX for Peace, and scientists across the world to push for an international ban on nuclear weapons. The ban was adopted in July, and after it opened for signature in September, over 50 countries signed it and 3 ratified it. And while the ban won’t eliminate all nuclear arsenals overnight, it is a big step towards stigmatizing nuclear weapons, just as the ban on landmines stigmatized those controversial weapons and pushed the superpowers to slash their arsenals.

MIT Conference: Reducing the Threat of Nuclear Weapons

Alongside FLI’s efforts to stigmatize nuclear weapons and build support for their elimination, we hosted a one-day conference at MIT. Speakers included Iran-deal broker Ernie Moniz (MIT, former Secretary of Energy), California Congresswoman Barbara Lee, Lisbeth Gronlund (Union of Concerned Scientists), Joe Cirincione (Ploughshares), our former congressman John Tierney, Massachusetts state representatives Denise Provost and Mike Connolly, and Cambridge Mayor Denise Simmons.

UN Ban on Nuclear Weapons Open Letter

At the initial UN negotiations to ban nuclear weapons in March, FLI presented a letter of support for the ban that has been signed by 3700 scientists from 100 countries – including 30 Nobel Laureates, Stephen Hawking, and former US Secretary of Defense William Perry.

“Scientists bear a special responsibility for nuclear weapons, since it was scientists who invented them and discovered that their effects are even more horrific than first thought”, the letter explains.

FLI also created a video highlighting scientific support for the nuclear ban, which was shown at the negotiations.

Beneficial AI Conference and the 23 Asilomar Principles

FLI kicked off 2017 with our Beneficial AI conference in Asilomar, where a collection of over 200 AI researchers, economists, psychologists, authors and other thinkers got together to discuss the future of artificial intelligence (videos here).

The conference produced a set of 23 Principles to guide the development of safe and beneficial AI. The Principles have been signed and supported by over 1200 AI researchers and 2500 others, including Elon Musk and Stephen Hawking.

But the Principles were just a start to the conversation. After the conference, FLI’s Ariel Conn began a series that looks at each principle in depth and provides insight from various AI researchers. Artificial intelligence will affect people across every segment of society, so we want as many people as possible to get involved. To date, tens of thousands of people have read these articles. You can read them all here and join the discussion!

2016

FLI Launches Monthly Podcast

In the fall of 2016, FLI launched a monthly podcast. Ariel interviews two experts for each episode, and topics have included top AI breakthroughs, nuclear winter, climate change, effective altruism, the UN nuclear ban, and the challenge of value alignment.

You can listen to the podcasts on Soundcloud, iTunes, and all other popular streaming platforms.

Timeline of Nuclear Close Calls

The most devastating military threat arguably comes from a nuclear war started not intentionally but by accident or miscalculation. Accidental nuclear war has almost happened many times already, and with 15,000 nuclear weapons worldwide — thousands on hair-trigger alert and ready to launch at a moment’s notice — an accident is bound to occur eventually.

To help concerned citizens understand the instability of the nuclear situation, FLI developed a timeline of all the instances where nuclear war was almost started by mistake.

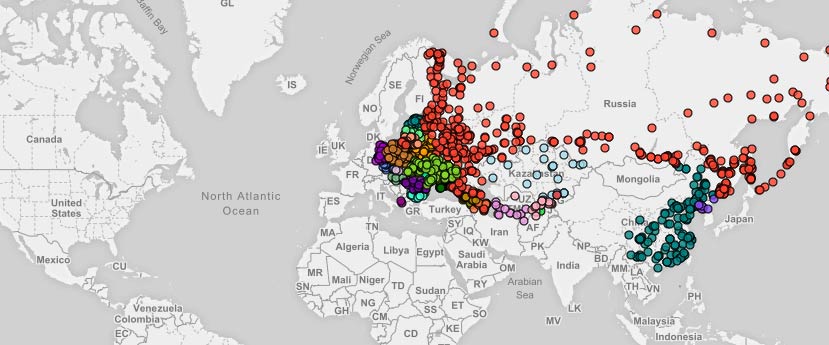

Declassified U.S. Nuclear Targets Map

The National Security Archive recently published a declassified list of U.S. nuclear targets from 1956, which spanned 1,100 locations across Eastern Europe, Russia, China, and North Korea.

To illustrate this for readers, FLI partnered with NukeMap to demonstrate how catastrophic a nuclear exchange between the United States and Russia could be. If you click detonate from any of the dots on the map, you can see how large an area would be destroyed by the bomb of your choice, as well as how many people could be killed. 150,000 people visited the map on the first day it launched, and hundreds of thousands of people have visited since.

MinutePhysics: Why You Should Care About Nukes

Henry Reich with MinutePhysics and FLI’s Max Tegmark got together to produce an entertaining video about just how scary nuclear weapons are, which reached over 1 million viewers. Nukes are a lot scarier than most people realize, as you might have picked up on if you’ve flipped through our nuclear accidents and close calls timeline. Happily, there’s an easy action you can take to help make the world a safer place. How?

Check out the video to learn more!

Nuclear Conference at MIT

FLI held a conference on reducing the threats of nuclear war at MIT, where Mayor Denise Simmons announced that the City of Cambridge had decided to divest $1 billion from nuclear weapons manufacturers. She said, “It’s my hope that this will inspire other municipalities, companies and individuals to look at their investments and make similar moves.”

We are thrilled with this success as Cambridge becomes the first billion-dollar investor in the U.S. to make such a move, joining over 50 European institutions.

2015

Open Letter on Autonomous Weapons

FLI worked with Stuart Russell, Toby Walsh and others to launch an open letter calling for a ban on offensive autonomous weapons. Walsh and Russell presented the letter at the International Joint Conference on Artificial Intelligence, making headlines around the world. By the end of the year, it had received over 22,000 signatures from scientists and citizens internationally, including over 3,000 AI and robotics researchers and 6 past presidents of the Association for the Advancement of Artificial Intelligence.

First AI Grants Competition

The Research Priorities Document became the basis for our beneficial AI research grant program. Shortly after creating it, we launched a search for grant recipients. Over 300 PIs and research groups applied for funding to be part of the new field of AI safety research, and on July 1, we announced the 37 AI safety research teams who would be awarded a total of $7 million for the first round of research.

Puerto Rico Conference on Beneficial AI

At the end of 2015, a Washington Post article described 2015 as the year the beneficial AI movement went mainstream. Only 360 days earlier, at the very start of the year, we were hosting the inaugural Puerto Rico Conference that helped launch that mainstreaming process. The goal of the conference was to bring more attention to the problems advanced artificial intelligence might bring and to galvanize the world’s talent to solve them.

The three big results of the conference were:

1) Worldwide attention to our Beneficial AI Open Letter, which garnered over 8600 signatures from some of the most influential AI researchers, some of the most influential scientists in other fields, and thousands of other AI researchers and scientists from around the world.

2) The creation of our Research Priorities Document, which became the basis for our beneficial AI research grant program.

3) Elon Musk’s announcement of a $10 million donation to help fund the grants.