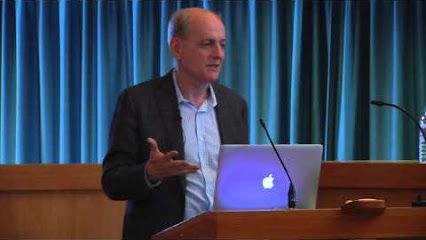

Stuart Russell on the long-term future of AI

Professor Stuart Russell recently gave a public lecture, "The Long-Term Future of (Artificial) Intelligence," hosted by the Centre for the Study of Existential Risk in Cambridge, UK. In the talk, he discusses key research problems in keeping future AI beneficial, such as containment and value alignment, and addresses many common misconceptions about the risks from AI.

“The news media in recent months have been full of dire warnings about the risk that AI poses to the human race, coming from well-known figures such as Stephen Hawking, Elon Musk, and Bill Gates. Should we be concerned? If so, what can we do about it? While some in the mainstream AI community dismiss these concerns, I will argue instead that a fundamental reorientation of the field is required.”
