FLI March 2021 Newsletter

We’re Hiring!

The Future of Life Institute is hiring for a Director of European Policy, Policy Advocate, and Policy Researcher.

The Director of European Policy will be responsible for leading and managing FLI’s European-based policy and advocacy efforts on both lethal autonomous weapon systems and artificial intelligence.

The Policy Advocate will be responsible for supporting FLI’s ongoing policy work and advocacy in the U.S. government, especially (but not exclusively) at a federal level. They will be focused primarily on influencing near-term policymaking on artificial intelligence to maximise the societal benefits of increasingly powerful AI systems. Additional policy areas of interest may include synthetic biology, nuclear weapons policy, and the general management of global catastrophic and existential risk.

The Policy Researcher will be responsible for supporting FLI’s ongoing policy work in a wide array of governance fora through the production of thoughtful, practical policy research. The role will focus primarily on researching near-term policymaking on artificial intelligence to maximise the societal benefits of increasingly powerful AI systems. Additional policy areas of interest may include lethal autonomous weapon systems, synthetic biology, nuclear weapons policy, and the general management of global catastrophic and existential risk.

The positions are remote, though from varying locations, and pay is negotiable, competitive, and commensurate with experience.

Applications are being accepted on a rolling basis until the positions are filled.

For further information about the roles and how to apply, click here.

Policy & Outreach Efforts

FLI Relaunches autonomousweapons.org

We are pleased to announce that, thanks to the brilliant efforts of Emilia Javorsky and Anna Yelizarova, we have now relaunched autonomousweapons.org. This site is intended as a comprehensive educational resource where anyone can go to learn about lethal autonomous weapon systems: weapons that can identify, select and target individuals without human intervention.

Lethal autonomous weapons are not the stuff of science fiction, nor do they look anything like the Terminator; they are already here in the form of unmanned aerial vehicles, vessels, and tanks. As the United States, United Kingdom, Russia, China, Israel and South Korea all race to develop and deploy them en masse, the need for international regulation to maintain meaningful human control over the use of lethal force has become ever more pressing.

Using autonomousweapons.org, you can read up on the global debate surrounding these emerging systems, the risks – from the potential for violations of international humanitarian law and algorithmic bias in facial recognition technologies to their being the ideal weapon for terror and assassination – the policy options and how you can get involved.

Nominate an Unsung Hero for the 2021 Future of Life Award!

We’re excited to share that we’re accepting nominations for the 2021 Future of Life Award!

The Future of Life Award is given to an individual who, without having received much recognition at the time, has helped make today dramatically better than it might otherwise have been.

The first two recipients, Vasili Arkhipov and Stanislav Petrov, made judgements that likely prevented a full-scale nuclear war between the U.S. and U.S.S.R. In 1962, amid the Cuban Missile Crisis, Arkhipov, stationed aboard a Soviet submarine headed for Cuba, refused to give his consent for the launch of a nuclear torpedo when the captain became convinced that war had broken out. In 1983, Petrov decided not to act on an alert from an early-warning detection system that had erroneously indicated five incoming U.S. nuclear missiles. We know today that a global nuclear war would cause a nuclear winter, possibly bringing about the permanent collapse of civilisation, if not human extinction.

The third recipient, Matthew Meselson, was the driving force behind the 1972 Biological Weapons Convention. Ratified by 183 countries, the treaty is credited with preventing biological weapons from ever entering into mainstream use.

The 2020 winners, William Foege and Viktor Zhdanov, made critical contributions towards the eradication of smallpox. Foege pioneered the public health strategy of ‘ring vaccination’ and surveillance, while Zhdanov, the Deputy Minister of Health for the Soviet Union at the time, convinced the WHO to launch and fund a global eradication programme. Smallpox is thought to have killed 500 million people in its last century, and its eradication in 1980 is estimated to have saved 200 million lives so far.

The Award is intended not only to celebrate humanity’s unsung heroes, but to foster a dialogue about the existential risks we face. We also hope that by raising the profile of individuals worth emulating, the Award will contribute to the development of desirable behavioural norms.

If you know of someone who has performed an incredible act of service to humanity but been overlooked by history, nominate them for the 2021 Award. This person may have made a critical contribution to a piece of groundbreaking research, set an important legal precedent, or perhaps alerted the world to a looming crisis; we’re open to suggestions! If your nominee wins, you’ll receive $3,000 from FLI as a token of our gratitude.

New Podcast Episodes

Roman Yampolskiy on the Uncontrollability, Incomprehensibility, and Unexplainability of AI

In this episode of the AI Alignment Podcast, Roman Yampolskiy, Professor of Computer Science at the University of Louisville, joins us to discuss whether we can control, comprehend, and explain AI systems, and how this constrains the project of AI safety.

Among other topics, Roman discusses the need for impossibility results within computer science, the halting problem, and his research findings on AI explainability, comprehensibility, and controllability, as well as how these facets relate to each other and to AI alignment.

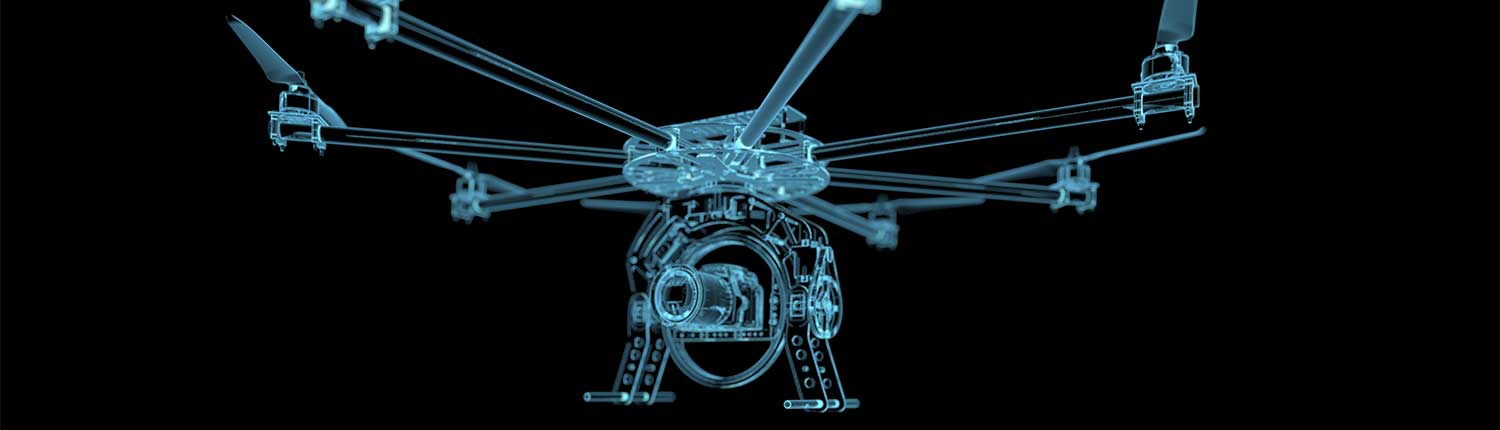

Stuart Russell and Zachary Kallenborn on Drone Swarms and the Riskiest Aspects of Lethal Autonomous Weapons

In this episode of the Future of Life Podcast, we are joined by Stuart Russell, Professor of Computer Science at the University of California, Berkeley, and Zachary Kallenborn, self-described “analyst in horrible ways people kill each other” and drone swarms expert, to discuss the highest-risk aspects of lethal autonomous weapons.

Stuart and Zachary cover a wide range of topics, including the potential for drone swarms to become weapons of mass destruction, as well as how they could be used to deploy biological, chemical and radiological weapons, the risks of rapid escalation of conflict, unpredictability and proliferation, and how the regulation of lethal autonomous weapons could set a precedent for future AI governance.

To learn more about lethal autonomous weapons, visit autonomousweapons.org.

Reading & Resources

Max Tegmark on the INTO THE IMPOSSIBLE Podcast

Max Tegmark joined Dr. Brian Keating on the INTO THE IMPOSSIBLE podcast to discuss questions such as whether we can grow our prosperity through automation without leaving people lacking income or purpose, how we can make future artificial intelligence systems more robust such that they do what we want without crashing, malfunctioning or getting hacked, and whether we should fear an arms race in lethal autonomous weapons.

How easy would it be to snuff out humanity?

“If you play Russian roulette with one or two bullets in the cylinder, you are more likely to survive than not, but the stakes would need to be astonishingly high – or the value you place on your life inordinately low – for this to be a wise gamble.”

Read this fantastic overview of the existential and global catastrophic risks humanity currently faces by Lord Martin Rees, Astronomer Royal and Co-founder of the Centre for the Study of Existential Risk, University of Cambridge.

Disease outbreaks more likely in deforestation areas, study finds

“Diseases are filtered and blocked by a range of predators and habitats in a healthy, biodiverse forest. When this is replaced by a palm oil plantation, soy fields or blocks of eucalyptus, the specialist species die off, leaving generalists such as rats and mosquitoes to thrive and spread pathogens across human and non-human habitats.”

A new study suggests that epidemics are likely to increase as a result of environmental destruction, in particular, deforestation and monoculture plantations.

Boris Johnson is playing a dangerous nuclear game

“By deciding to increase the cap, the UK – the world’s third country to develop its own nuclear capability – is sending the wrong signal: rearm. Instead, the world should be headed to the negotiating table to breathe new life into the arms control talks…The UK could play an important role in stopping the new nuclear arms race, instead of restarting it.”

A useful analysis by Serhii Plokhy, Professor of History at Harvard University, of how Prime Minister Boris Johnson may fuel a nuclear arms race by increasing the United Kingdom’s nuclear stockpile by 40%.