From the WP: How do you teach a machine to be moral?

In case you missed it…

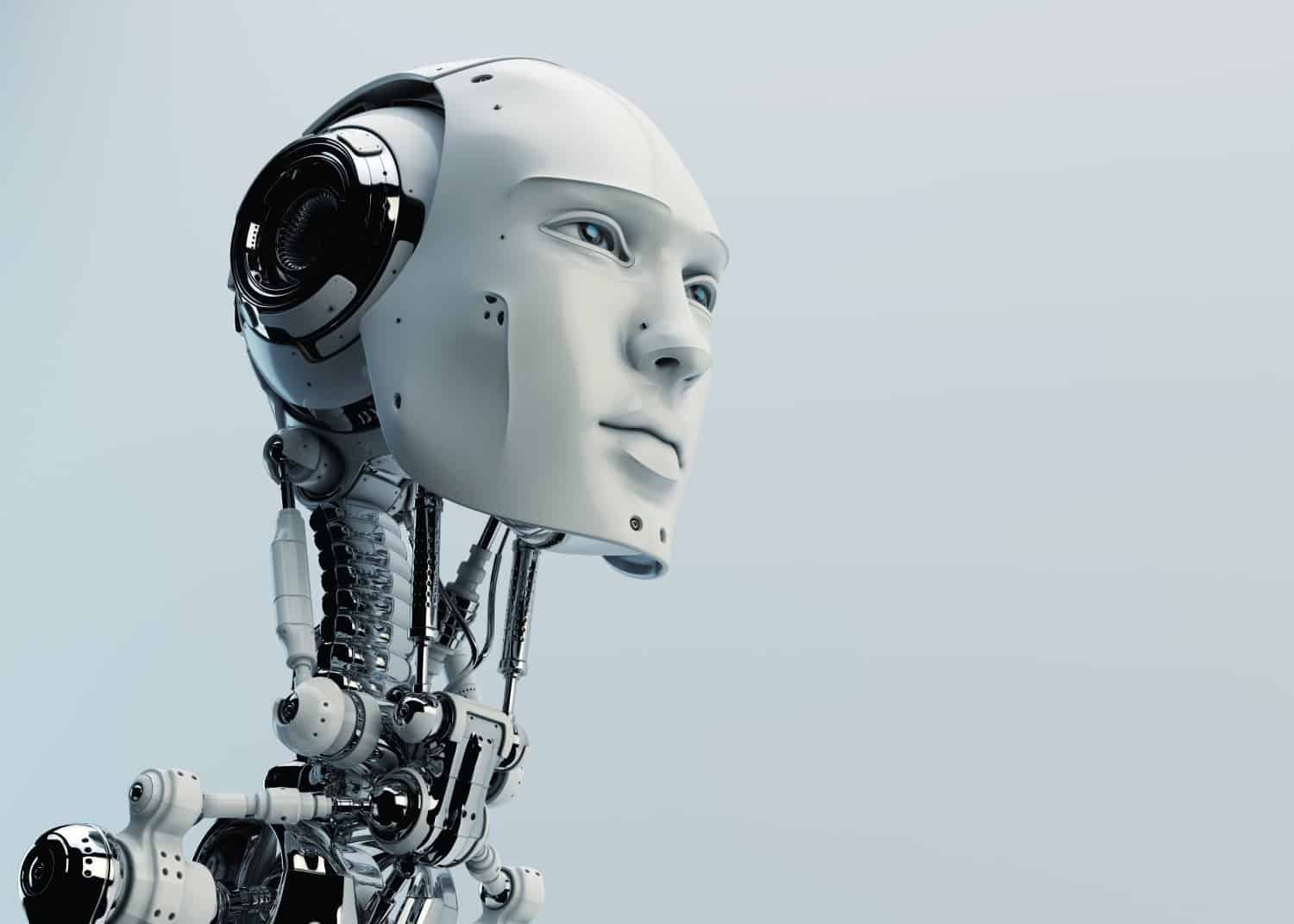

Francesca Rossi, a member of the FLI scientific advisory board and one of 37 grant recipients of the AI safety research program, recently wrote an article for the Washington Post describing the challenges of building an artificial intelligence that shares the ethics and morals of people. In the article, she highlights her work with a team that includes not just AI researchers but also philosophers and psychologists, who are working together to teach AI to be both trustworthy and trusted by the people it will work with.
