FLI’s President and CEO on Trump’s support for an AI ‘kill switch’

Asked in an interview aired yesterday by Fox Business whether AI needs safeguards or a ‘kill switch,’ President Trump said “there should be.” A full statement from FLI President and CEO Anthony Aguirre is below.

Key Context:

- Anthropic’s Mythos model has rocked the AI and cybersecurity world, demonstrating just how vulnerable the internet, our economy, and our society are to increasingly powerful AI systems.

- The UK AI Security Institute’s report describing their testing of Mythos showed “a step up over previous frontier models in a landscape where cyber performance was already rapidly improving.”

- The report also noted that Mythos was able to complete a “32-step corporate network attack simulation spanning initial reconnaissance through to full network takeover, which we estimate to require humans 20 hours to complete.”

- Meanwhile, an AI arms race is breaking out with countries developing ever more sophisticated AI weapons and AI-integrated weapons platforms.

Anthony Aguirre, President and CEO of the Future of Life Institute, issued the following statement in response to President Trump’s support of AI safeguards and a ‘kill switch’:

“President Trump is exactly right: advanced AI systems need a robust off-switch to ensure humans never lose control over them. Claude Mythos demonstrated just how vulnerable our economy and our financial system are to the offensive cyber capabilities of the latest AI systems, to say nothing of more capable systems to come. In pre-release testing, Mythos autonomously discovered zero-day vulnerabilities across every major operating system and web browser, including flaws that had survived decades of human review.

“Models like Mythos are nearly superhuman in their ability to find and exploit vulnerabilities in critical systems. If we go forward building highly superhuman AI – which FLI believes we should not – for any hope of control we cannot rely on software-based safety measures alone. We need the ability to manage these systems at the hardware level. This is entirely feasible. The same sort of hardware security measures that keep your face private and allow you to remotely shut down your iPhone exist on AI chips, and can be used to contain AI models and discontinue their operation if needed. FLI has prototyped these capabilities itself, and if we can do it then NVIDIA AI developers surely can as well, at scale, and with increasing robustness in new generations of hardware.”

About the Future of Life Institute

The Future of Life Institute (FLI) is the world’s oldest and largest AI think tank, with a team of 35+ full-time staff operating across the US and Europe. FLI has been working to steer the development of transformative technologies towards benefitting life and away from extreme large-scale risks since its founding in 2014. Find out more about our mission or explore our work.

Related content

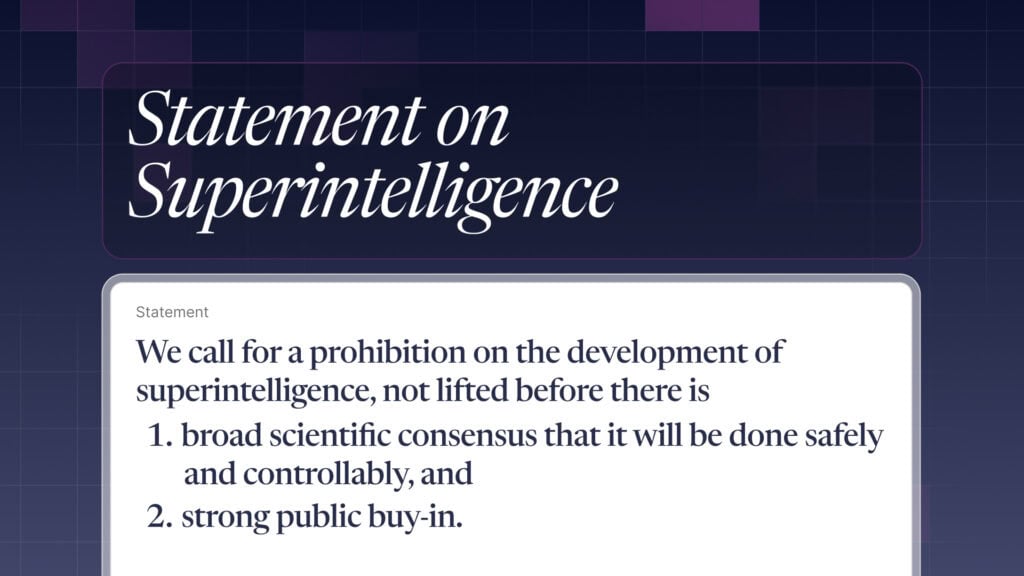

Other posts about Statement

FLI CEO’s statement on the attack against Sam Altman’s home

Statement: Head of US Policy on the White House AI legislative recommendations

Statement from Max Tegmark on the Department of War’s ultimatum

Michael Kleinman reacts to breakthrough AI safety legislation

Some of our Communications projects

Control Inversion