Prominent Scientists, Faith Leaders, Policymakers and Artists Call for a Prohibition on Superintelligence, as Poll Shows Americans Don’t Want It

Contact: Chase Hardin, chase@futureoflife.org

Campbell, CA – Today, the Future of Life Institute (FLI) launched an initiative signed by an unprecedented and politically diverse coalition of world-renowned AI scientists, faith leaders, policymakers, actors and other prominent voices. The initiative calls for a prohibition on the development of superintelligence until the technology is reliably safe and controllable, and has public buy-in – which it sorely lacks, according to a new poll.

“Frontier AI systems could surpass most individuals across most cognitive tasks within just a few years. These advances could unlock solutions to major global challenges, but they also carry significant risks. To safely advance toward superintelligence, we must scientifically determine how to design AI systems that are fundamentally incapable of harming people, whether through misalignment or malicious use. We also need to make sure the public has a much stronger say in decisions that will shape our collective future,” said Yoshua Bengio, the world’s most cited AI scientist and Professor at Université de Montréal.

The statement is signed by a remarkably broad coalition representing multiple perspectives and disciplines: Nobel Laureates, Turing Award winners, leading AI researchers, national security experts, religious leaders, authors, and cultural figures. Notable signatories include national security figures such as Mike Mullen, U.S. Navy Admiral (retired), Chairman of the Joint Chiefs of Staff under Presidents George W. Bush and Barack Obama; evangelical leaders Johnnie Moore and Walter Kim; Papal AI Advisor Paolo Benanti; artists Joseph Gordon-Levitt and will.i.am; digital safety advocates Prince Harry and Meghan, Duke and Duchess of Sussex; AI pioneers Geoffrey Hinton and Stuart Russell; Nobel Laureates Beatrice Fihn, Frank Wilczek, John C. Mather, and Daron Acemoğlu; and business trailblazers Richard Branson and Steve Wozniak.

“To get the most from what AI has to offer mankind, there is simply no need to reach for the unknowable and highly risky goal of superintelligence, which is by far a frontier too far. By definition this would result in a power that we could neither understand nor control,” said actor Stephen Fry.

FLI has concurrently released a national U.S. poll which reveals widespread discontent about the trajectory of AI (only 5% support the current status quo of unregulated development), overwhelming support for robust regulation (73%) and the view that superintelligence should not be developed until there is scientific agreement that it is safe and controllable (64%). Full results are here.

“95% of Americans don’t want a race to superintelligence, and experts want to ban it,” said Future of Life President Max Tegmark, a professor doing AI research at MIT.

Superintelligence is defined as artificial intelligence capable of outperforming all humans at most cognitive tasks. Leading AI experts believe that such systems are less than ten years away, and they warn that we do not know how to control superintelligence were it to be created. Rather than slowing down AI development, pre-eminent figures are instead calling for secure innovation through controllable AI tools that solve specific problems in health, energy, education, and beyond.

“Many people want powerful AI tools for science, medicine, productivity, and other benefits. But the path AI corporations are taking, of racing toward smarter-than-human AI that is designed to replace people, is wildly out of step with what the public wants, scientists think is safe, or religious leaders feel is right,” said Anthony Aguirre, co-founder and Executive Director at the Future of Life Institute. “Nobody developing these AI systems has been asking humanity if this is OK. We did – and they think it’s unacceptable.”

The statement and poll reflect a growing global consensus: while AI holds extraordinary potential for progress and prosperity, rushing toward superintelligence without safeguards could have catastrophic consequences. This rapid pursuit raises serious concerns, ranging from economic displacement and disempowerment, loss of freedoms and civil liberties, erosion of dignity and human control, and national security risks to the possibility of human extinction. The statement underscores that AI tools could be used to fuel a pro-human AI renaissance which fosters human flourishing and helps solve our most pressing challenges. Instead, tech companies currently pursue potentially dangerous technology without guardrails, oversight, or broad public consent.

The full statement reads: “We call for a prohibition on the development of superintelligence, not lifted before there is 1) broad scientific consensus that it will be done safely and controllably, and 2) strong public buy-in.”

The full list of signatories is available at https://superintelligence-statement.org.

Additional quotes from statement signatories:

Mary Robinson: “AI offers extraordinary promise to advance human rights, tackle inequality, and protect our planet, but the pursuit of superintelligence threatens to undermine the very foundations of our common humanity. We must act with both ambition and responsibility by choosing the path of human-centred AI that serves dignity and justice.”

Johnnie Moore: “We should rapidly develop powerful AI tools that help cure diseases and solve practical problems, but not autonomous smarter-than-human machines that nobody knows how to control. Creating superintelligent machines is not only unacceptably dangerous and immoral, but also completely unnecessary.”

Joseph Gordon-Levitt: “Yeah, we want specific AI tools that can help cure diseases, strengthen national security, etc. But does AI also need to imitate humans, groom our kids, turn us all into slop junkies and make zillions of dollars serving ads? Most people don’t want that. But that’s what these big tech companies mean when they talk about building ‘Superintelligence’.”

Stuart Russell: “This is not a ban or even a moratorium in the usual sense. It’s simply a proposal to require adequate safety measures for a technology that, according to its developers, has a significant chance to cause human extinction. Is that too much to ask?”

Walter Kim: “If we race to build superintelligence without clear and morally informed parameters, we risk undermining the incredible potential AI has to alleviate suffering and enable flourishing. We should intentionally harness this amazing technology to help people, not rush to build machines and mechanisms we cannot control.”

Yuval Noah Harari: “Superintelligence would likely break the very operating system of human civilization – and is completely unnecessary. If we instead focus on building controllable AI tools to help real people today, we can far more reliably and safely realize AI’s incredible benefits.”

Prince Harry, Duke of Sussex: “The future of AI should serve humanity, not replace it. I believe the true test of progress will be not how fast we move, but how wisely we steer. There is no second chance.”

Jon Wolfsthal, Special Assistant to the President and Senior Director at the National Security Council: “The discussion over AGI should not be cast as a struggle between so-called doomers and optimists. AGI presents a common challenge for all of humanity. We must ensure we control technology and it does not control us. Until and unless developers and their funders know that a technology with the capacity to be smarter, faster, stronger and just as lethal as humanity cannot escape human control, it must not be unleashed. Ensuring we can enjoy the benefits of AI and AGI requires us to be responsible in its development.”

Mark Beall: “When AI researchers warn of extinction and tech leaders build doomsday bunkers, prudence demands we listen. Superintelligence without proper safeguards could be the ultimate expression of human hubris – power without moral restraint.”

About the Future of Life Institute

The Future of Life Institute (FLI) is the world’s oldest and largest AI think tank, with a team of 35+ full-time staff operating across the US and Europe. FLI has been working to steer the development of transformative technologies towards benefitting life and away from extreme large-scale risks since its founding in 2014. Find out more about our mission or explore our work.

Related content

Other posts about Press release

Governor DeSantis Directs Florida State Agencies to Partner with Future of Life Institute to Shield Families from AI Harm

“This is What it Means to be Pro-Human” Declares Broad Coalition of Conservative, Progressive, and Civil Society Groups in Statement of Shared Principles on AI

Future of Life Institute Launches Multimillion Dollar Nationwide AI Regulation Campaign

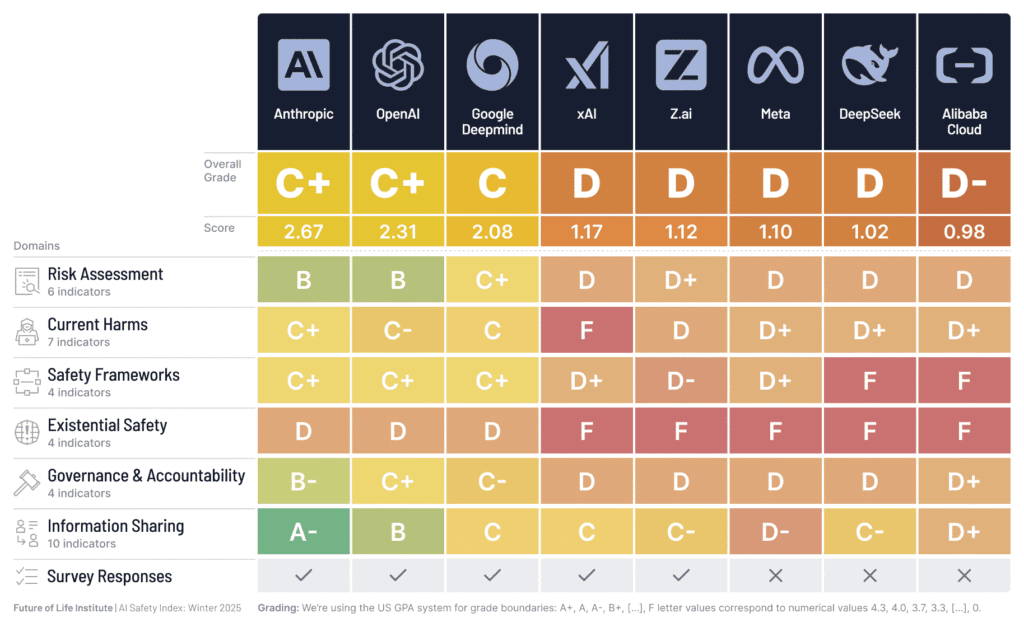

AI Company Safety Practices Fall Short of Public Commitments and Show Structural Weaknesses, as Top Performers Widen the Gap

Some of our Communications projects

Control Inversion