Max Tegmark on AGI Manhattan Project

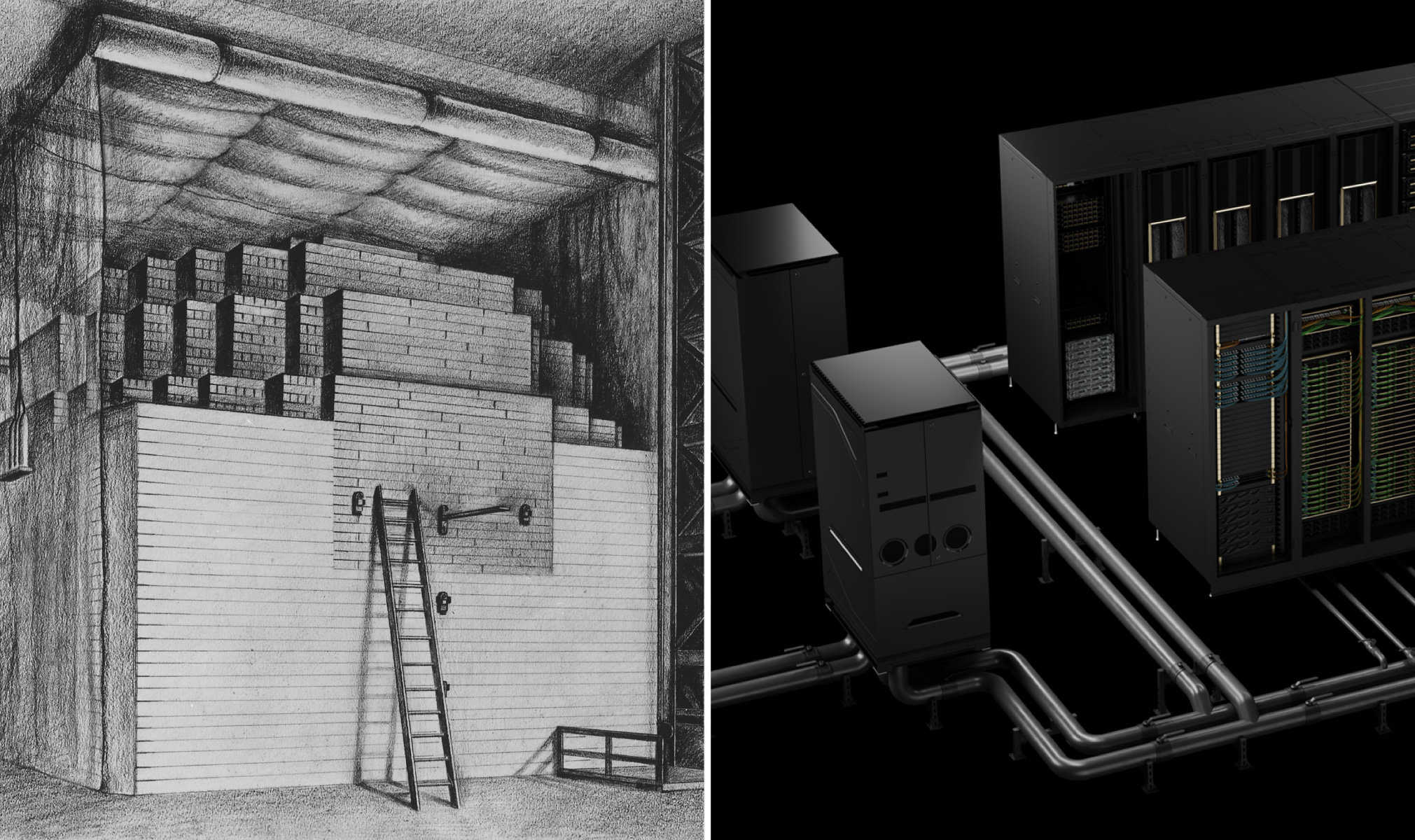

A new report by the US-China Economic and Security Review Commission recommends that “Congress establish and fund a Manhattan Project-like program dedicated to racing to and acquiring an Artificial General Intelligence (AGI) capability”.

An AGI race is a suicide race. The proposed AGI Manhattan project, and the fundamental misunderstanding that underpins it, represents an insidious growing threat to US national security. Any system better than humans at general cognition and problem solving would by definition be better than humans at AI research and development, and therefore able to improve and replicate itself at a terrifying rate. The world’s pre-eminent AI experts agree that we have no way to predict or control such a system, and no reliable way to align its goals and values with our own. This is why the CEOs of OpenAI, Anthropic and Google DeepMind joined a who’s who of top AI researchers last year to warn that AGI could cause human extinction. Selling AGI as a boon to national security flies in the face of scientific consensus. Calling it a threat to national security is a remarkable understatement.

AGI advocates disingenuously dangle benefits such as disease and poverty reduction, but the report reveals a deeper motivation: the false hope that it will grant its creator power. In fact, the race with China to first build AGI can be characterized as a “hopium war” – fueled by the delusional hope that it can be controlled.

In a competitive race, there will be no opportunity to solve the unsolved technical problems of control and alignment, and every incentive to cede decisions and power to the AI itself. The almost inevitable result would be an intelligence far greater than our own that is not only inherently uncontrollable, but could itself be in charge of the very systems that keep the United States secure and prosperous. Our critical infrastructure – including nuclear and financial systems – would have little protection against such a system. As AI Nobel Laureate Geoff Hinton said last month, “Once the artificial intelligences get smarter than we are, they will take control.”

The report is committing scientific fraud by suggesting AGI is almost certainly controllable. More generally, the claim that such a project is in the interest of “national security” disingenuously misrepresents the science and implications of this transformative technology, as evidenced by technical confusions in the report itself – which appears to have been written without much input from AI experts. The U.S. should reliably strengthen national security not by losing control of AGI, but by building game-changing Tool AI that strengthens its industry, science, education, healthcare, and defence, and in doing so reinforce U.S. leadership for generations to come.

Max Tegmark

President, Future of Life Institute

About the Future of Life Institute

The Future of Life Institute (FLI) is the world’s oldest and largest AI think tank, with a team of 35+ full-time staff operating across the US and Europe. FLI has been working to steer the development of transformative technologies towards benefitting life and away from extreme large-scale risks since its founding in 2014. Find out more about our mission or explore our work.
