Google DeepMind Falls Behind OpenAI in Latest Safety Review; All AI Companies Still Falling Short, Say Experts

Media Contact: Chase Hardin, chase@futureoflife.org, +1 (623) 986-0161

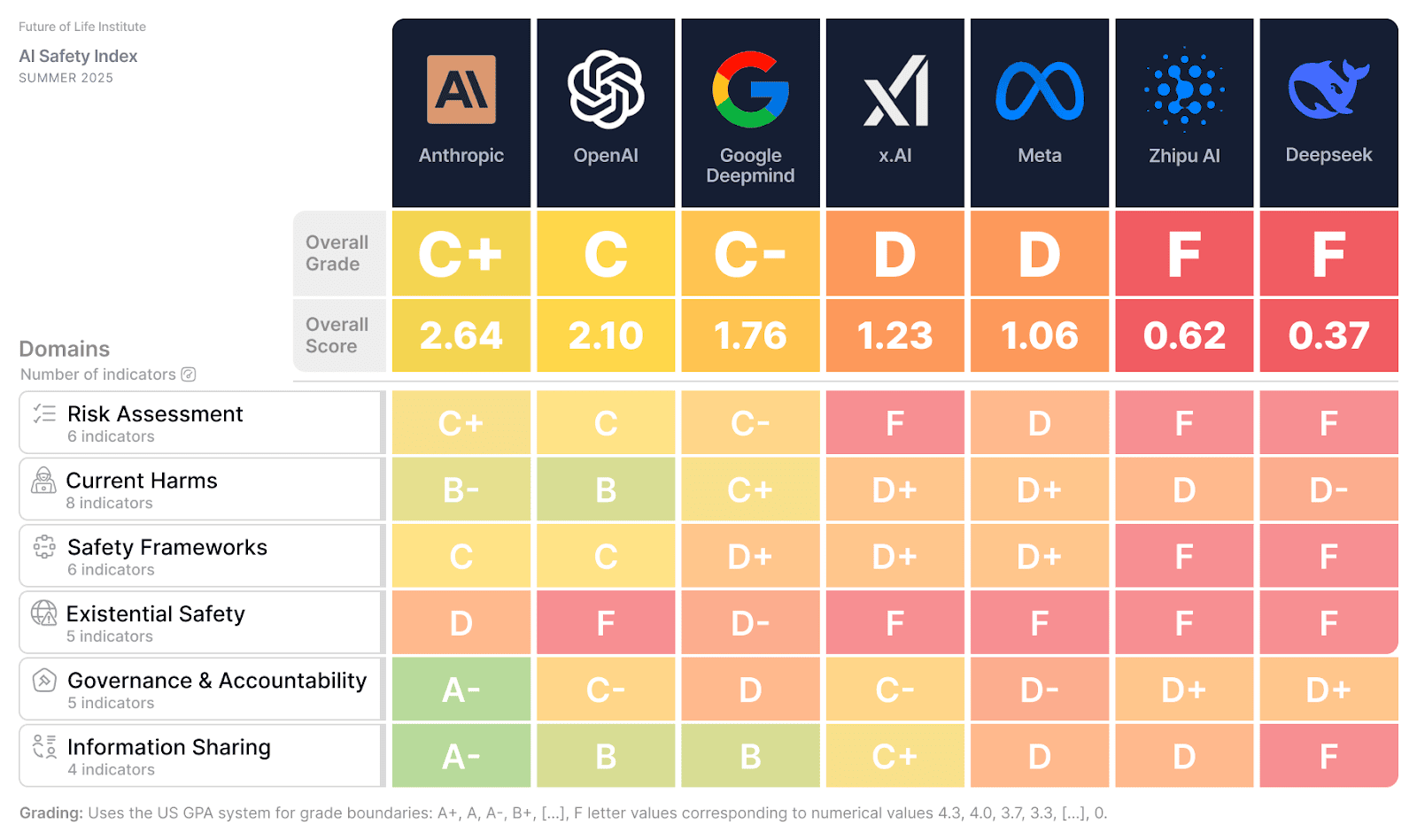

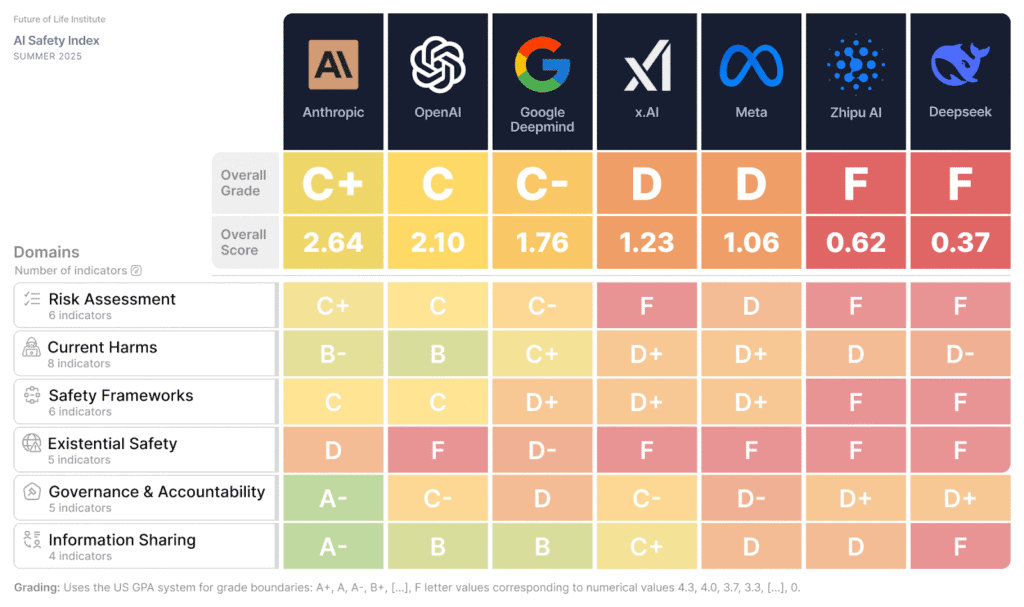

CAMPBELL, CA — Today, the Future of Life Institute (FLI) released the Summer 2025 edition of its AI Safety Index, in which leading experts in artificial intelligence and public policy evaluated the safety practices of top AI companies Anthropic, Google DeepMind, Meta, OpenAI, xAI, DeepSeek, and Zhipu AI. The expert review panel assessed each organization across six core dimensions: Risk Assessment, Current Harms, Safety Frameworks, Existential Safety, Governance, and Information Sharing.

While some companies like OpenAI and Anthropic made progress in specific areas, such as external assessment of their safety frameworks, the overall results of the index reveal deep inconsistencies and critical shortfalls in how AI companies are addressing the growing risks posed by advanced AI systems. Notably, reviewers emphasized that no company has a robust strategy for ensuring meaningful control over the systems they’re creating or even effectively determining how much risk they pose.

“Some companies are making token efforts, but none are doing enough,” said Stuart Russell, OBE, Professor of Computer Science at UC Berkeley. “We are spending hundreds of billions of dollars to create superintelligent AI systems over which we will inevitably lose control. We need a fundamental rethink of how we approach AI safety. This is not a problem for the distant future; it’s a problem for today.”

The final report is available here. It includes safety framework grades from a complementary report produced by SaferAI, released on the same day and viewable here.

This is the second iteration of FLI’s AI Safety Index, which was first published in December 2024. Since then, OpenAI has overtaken Google DeepMind in the rankings, partly by improving its transparency, publicly posting a whistleblower policy, and sharing company information for this Index. Technical capabilities have also grown dramatically since December. Several newly released systems have demonstrated astonishing capability leaps, including GPT-4.5, o3, DeepSeek R1, Gemini 2.5, Claude 4 and Grok 4. Some have achieved impressive scores on Humanity’s Last Exam and the ARC-AGI test. However, many of those same systems have also demonstrated the capability to lie to and blackmail their programmers, cheat at various tasks, purposely hide their tendencies when tested, and even make copies of themselves to avoid being replaced or switched off.

Chinese AI firms Zhipu AI and DeepSeek both received failing overall grades. However, the report scores companies on norms such as self-governance and information-sharing, which are far less prominent in Chinese corporate culture. Furthermore, as China already has regulations for advanced AI development, there is less reliance on AI safety self-governance. This is in contrast to the United States and United Kingdom, where the other companies are based, and which have, as yet, passed no such regulation on frontier AI. Note: the scoring was completed in early July and does not reflect recent events such as xAI’s Grok 4 release, Meta’s superintelligence announcement or OpenAI’s commitment to sign the EU AI Act Code of Practice.

“These findings reveal that self-regulation simply isn’t working, and that the only solution is legally binding safety standards like we have for medicine, food and airplanes,” said Max Tegmark, MIT professor and President of the Future of Life Institute. “It’s pretty crazy that companies still oppose regulation while claiming they’re just years away from superintelligence.”

Grades were based on publicly available documents and each company’s responses to a survey sent by FLI. A major concern highlighted by the panel was the degree to which competitive dynamics appear to be driving companies to deprioritize safety — or define it too narrowly — in pursuit of performance and market advantage.

Review panelists:

Dylan Hadfield-Menell: Dylan Hadfield-Menell is the Bonnie and Marty (1964) Tenenbaum Career Development Assistant Professor at MIT, where he leads the Algorithmic Alignment Group at the Computer Science and Artificial Intelligence Laboratory (CSAIL). A Schmidt Sciences AI2050 Early Career Fellow, his research focuses on safe and trustworthy AI deployment, with particular emphasis on multi-agent systems, human-AI teams, and societal oversight of machine learning.

David Krueger: David Krueger is an Assistant Professor in Robust, Reasoning and Responsible AI in the Department of Computer Science and Operations Research (DIRO) at University of Montreal, and a Core Academic Member at Mila, UC Berkeley’s Center for Human-Compatible AI, and the Center for the Study of Existential Risk. His work focuses on reducing the risk of human extinction from artificial intelligence through technical research as well as education, outreach, governance and advocacy.

Tegan Maharaj: Tegan Maharaj is an Assistant Professor in the Department of Decision Sciences at HEC Montréal, where she leads the ERRATA lab on Ecological Risk and Responsible AI. She is also a core academic member at Mila. Her research focuses on advancing the science and techniques of responsible AI development. Previously, she served as an Assistant Professor of Machine Learning at the University of Toronto.

Jessica Newman: Jessica Newman is the Director of the AI Security Initiative (AISI), housed at the UC Berkeley Center for Long-Term Cybersecurity. She is also a Co-Director of the UC Berkeley AI Policy Hub. Newman’s research focuses on the governance, policy, and politics of AI, with particular attention on comparative analysis of national AI strategies and policies, and on mechanisms for the evaluation and accountability of organizational development and deployment of AI systems.

Sneha Revanur: Sneha Revanur is the founder and president of Encode, a global youth-led organization advocating for the ethical regulation of AI. Under her leadership, Encode has mobilized thousands of young people to address challenges like algorithmic bias and AI accountability. She was featured on TIME’s inaugural list of the 100 most influential people in AI.

Stuart Russell, OBE: Stuart Russell is a Professor of Computer Science at the University of California at Berkeley, holder of the Smith-Zadeh Chair in Engineering, and Director of the Center for Human-Compatible AI and the Kavli Center for Ethics, Science, and the Public. He is a recipient of the IJCAI Computers and Thought Award, the IJCAI Research Excellence Award, and the ACM Allen Newell Award. In 2021 he received the OBE from Her Majesty Queen Elizabeth and gave the BBC Reith Lectures. He co-authored the standard textbook for AI, which is used in over 1500 universities in 135 countries.

About the Future of Life Institute

The Future of Life Institute (FLI) is the world’s oldest and largest AI think tank, with a team of 35+ full-time staff operating across the US and Europe. FLI has been working to steer the development of transformative technologies towards benefitting life and away from extreme large-scale risks since its founding in 2014. Find out more about our mission or explore our work.
