AI Company Safety Practices Fall Short of Public Commitments and Show Structural Weaknesses, as Top Performers Widen the Gap

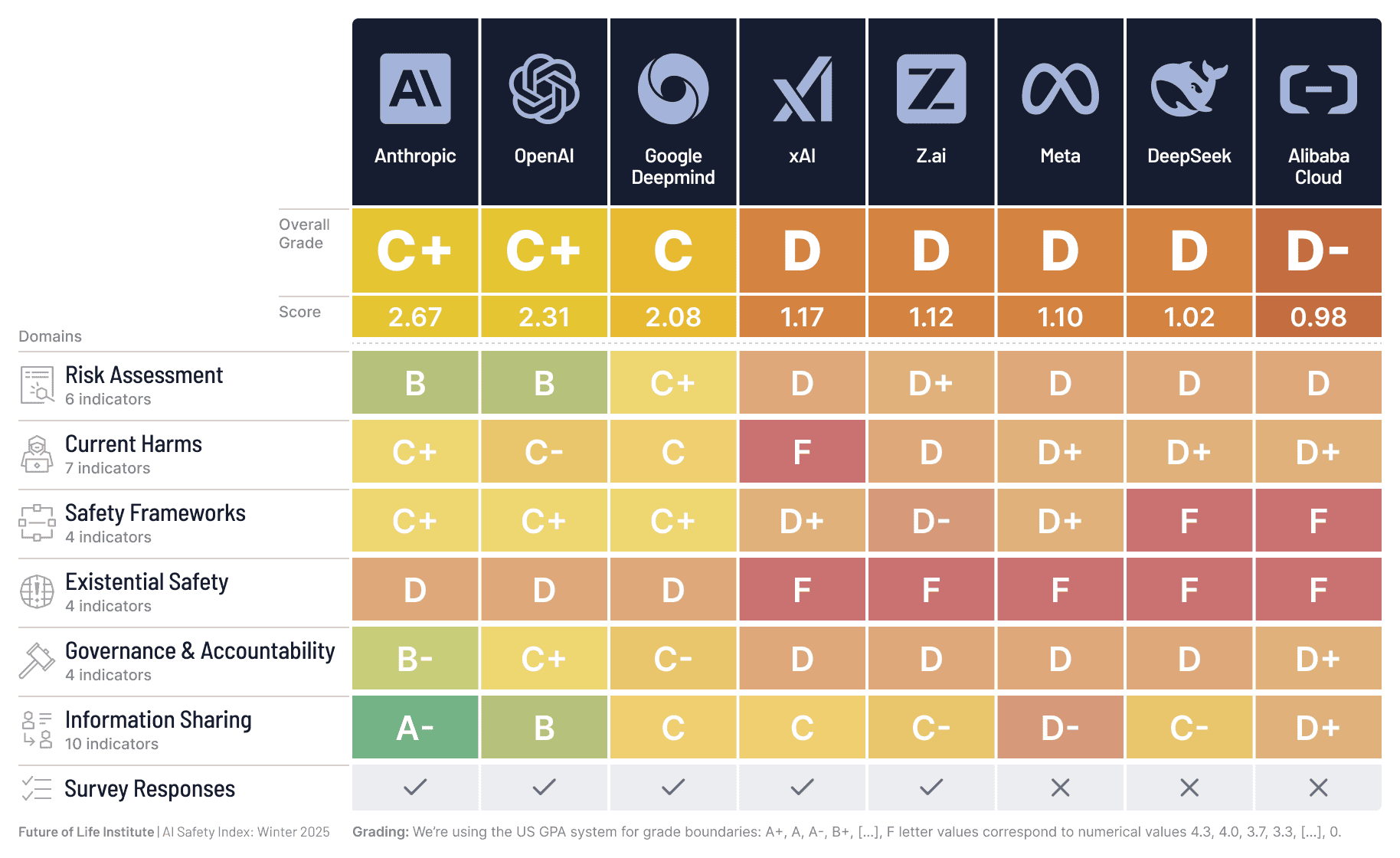

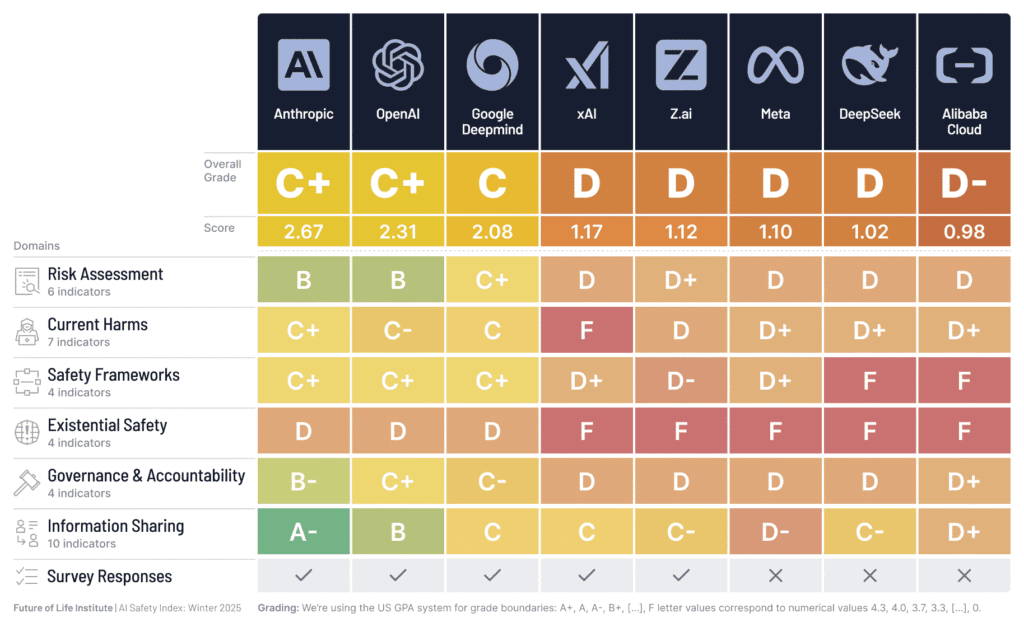

Campbell, CA – Today, the Future of Life Institute (FLI) released the Winter 2025 edition of its AI Safety Index, in which an independent panel of leading experts evaluated the safety practices of eight major AI companies worldwide. Dimensions assessed include Risk Assessment, Current Harms, Safety Frameworks, Existential Safety, Governance, and Information Sharing. Participating companies this year included Anthropic, OpenAI, Google DeepMind, xAI, Meta, Z.ai, DeepSeek, and Alibaba Cloud.

Despite public commitments and incremental improvements in areas such as Risk Assessment, company safety practices continue to fall far short of emerging global standards. This is primarily due to the uneven depth, specificity, and quality of implementation.

“Despite recent uproar over AI-powered hacking and AI driving people to psychosis and self-harm, US AI companies remain less regulated than restaurants and continue lobbying against binding safety standards,” said MIT Professor Max Tegmark, FLI’s President.

This shortfall is particularly alarming given recent leaps in capability, with some systems now reasoning at PhD level in the hard sciences and others winning gold medals at the International Mathematical Olympiad. These technical advances are already manifesting as real-world threats, such as the AI-orchestrated large-scale cyber espionage campaign Anthropic revealed in September.

“AI CEOs claim they know how to build superhuman AI, yet none can show how they’ll prevent us from losing control – after which humanity’s survival is no longer in our hands,” writes Stuart Russell, Professor of Computer Science at UC Berkeley. “I’m looking for proof that they can reduce the annual risk of control loss to one in a hundred million, in line with nuclear reactor requirements. Instead, they admit the risk could be one in ten, one in five, even one in three, and they can neither justify nor improve those numbers.”

Reviewers found that, despite all of the companies explicitly racing to develop AGI/superintelligence, none of them had a robust strategy for controlling such smarter-than-human systems. The industry’s core structural weakness is leaving this risk unaddressed, even as the AI company leaders themselves admit the creation of this technology could have catastrophic outcomes, including human extinction. Just last month, the CEO of Z.ai (one of the companies evaluated in the Index) signed a statement – along with 120,000+ other AI experts, politicians, religious leaders, artists and concerned citizens – calling for a ban on superintelligence until it is controllable and has public support.

The Index also provides company-specific recommendations on how to improve safety practices and their resulting scores. For example, Current Harms scores fell considerably compared with previous editions, in no small part due to recent horrific incidents involving ChatGPT, and reviewers recommended that OpenAI “increase efforts to prevent AI psychosis and suicide, and act less adversarially toward alleged victims.”

“If we’d been told in 2016 that the largest tech companies in the world would run chatbots that enact pervasive digital surveillance, encourage kids to kill themselves, and produce documented psychosis in long-term users, it would have sounded like a paranoid fever dream. Yet we are being told not to worry,” said Prof. Tegan Maharaj at HEC Montréal.

The findings also spotlight a clear and growing divide between the top performers (Anthropic, OpenAI and Google DeepMind) and the stragglers (xAI, Z.ai, Meta, Alibaba Cloud and DeepSeek). This gulf spans the domains of risk assessment, safety frameworks, and information sharing, and is driven by limited disclosure, weak evidence of systematic safety processes, and uneven adoption of robust evaluation practices.

About the Future of Life Institute

The Future of Life Institute (FLI) is the world’s oldest and largest AI think tank, with a team of 35+ full-time staff operating across the US and Europe. FLI has been working to steer the development of transformative technologies towards benefitting life and away from extreme large-scale risks since its founding in 2014. Find out more about our mission or explore our work.
