Miles Apart: Comparing key AI Act proposals

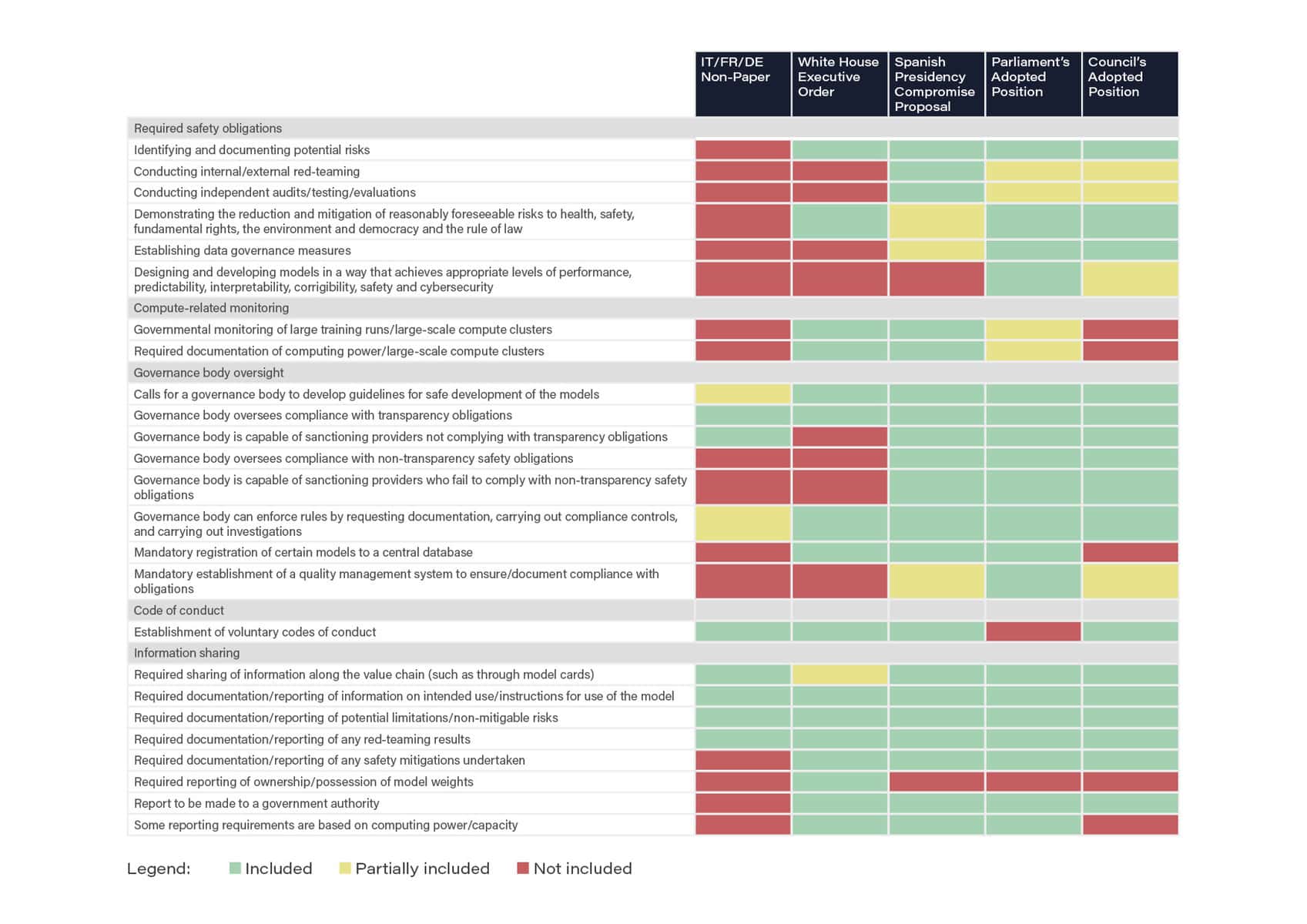

The table below analyzes several transatlantic policy proposals for regulating the most advanced AI systems. The analysis shows that the recent non-paper circulated by Italy, France, and Germany (as reported by Euractiv) includes the fewest provisions with regard to foundation models or general-purpose AI systems, falling below even the minimal standard set by a recent U.S. White House Executive Order.

While the non-paper proposes a voluntary code of conduct, it does not include any of the safety obligations required by previous proposals, including the Council’s own adopted position. Moreover, the non-paper envisions a much lower level of oversight and enforcement than the Spanish Presidency’s compromise proposal and the adopted positions of both the Parliament and the Council.

About the Future of Life Institute

The Future of Life Institute (FLI) is the world’s oldest and largest AI think tank, with a team of 35+ full-time staff operating across the US and Europe. FLI has been working to steer the development of transformative technologies towards benefitting life and away from extreme large-scale risks since its founding in 2014. Find out more about our mission or explore our work.
