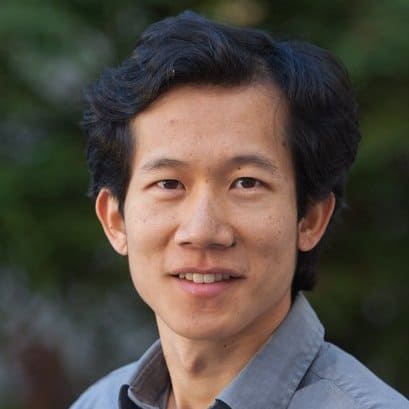

Transparent and Interpretable AI: an interview with Percy Liang

In September 2017, the United States House of Representatives passed a bill called the SELF DRIVE Act, laying out an initial federal framework for autonomous vehicle regulation. Autonomous cars have been undergoing testing on public roads for almost two decades. With the passage of this bill, along with the increasing safety benefits of autonomous vehicles, it is likely that they will become even more prevalent in our daily lives. The same is true of numerous autonomous technologies, including those in the medical, legal, and safety fields, to name a few.

To that end, researchers, developers, and users alike must be able to have confidence in these types of technologies that rely heavily on artificial intelligence (AI). This extends beyond autonomous vehicles, applying to everything from security devices in your smart home to the personal assistant in your phone.

Predictability in Machine Learning

Percy Liang, Assistant Professor of Computer Science at Stanford University, explains that humans rely on some degree of predictability in their day-to-day interactions — both with other humans and automated systems (including, but not limited to, their cars). One way to create this predictability is by taking advantage of machine learning.

Machine learning deals with algorithms that allow an AI to “learn” based on data gathered from previous experiences. Developers do not need to write code that dictates each and every action or intention for the AI. Instead, the system recognizes patterns in its experiences and chooses an action based on that data. It is akin to the process of trial and error.

A key question often asked of machine learning systems in the research and testing environment is, “Why did the system make this prediction?” About this search for intention, Liang explains:

“If you’re crossing the road and a car comes toward you, you have a model of what the other human driver is going to do. But if the car is controlled by an AI, how should humans know how to behave?”

It is important to see that a system is performing well, but perhaps even more important is its ability to explain in easily understandable terms why it acted the way it did. Even if the system is not accurate, it must be explainable and predictable. For AI to be safely deployed, systems must rely on well-understood, realistic, and testable assumptions.

Current theories that explore the idea of reliable AI focus on fitting the observable outputs in the training data. However, as Liang explains, this could lead “to an autonomous driving system that performs well on validation tests but does not understand the human values underlying the desired outputs.”

Running multiple tests is important, of course. These types of simulations, explains Liang, “are good for debugging techniques — they allow us to more easily perform controlled experiments, and they allow for faster iteration.”

However, to really know whether a technique is effective, “there is no substitute for applying it to real life,” says Liang. “This goes for language, vision, and robotics.” An autonomous vehicle may perform well in all testing conditions, but there is no way to accurately predict how it could perform in an unpredictable natural disaster.

Interpretable ML Systems

The best-performing models in many domains (e.g., deep neural networks for image and speech recognition) are quite complex. These are considered “black-box models,” and their predictions can be difficult, if not impossible, to explain.

Liang and his team are working to interpret these models by researching how a particular training situation leads to a prediction. As Liang explains, “Machine learning algorithms take training data and produce a model, which is used to predict on new inputs.”

This type of observation becomes increasingly important as AIs take on more complex tasks, including life-or-death situations such as interpreting medical diagnoses. “If the training data has outliers or adversarially generated data,” says Liang, “this will affect (corrupt) the model, which will in turn cause predictions on new inputs to be possibly wrong. Influence functions allow you to track precisely the way that a single training point would affect the prediction on a particular new input.”
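To make the idea of an influence function concrete, here is a minimal sketch in Python. It is not Liang's implementation: it assumes a toy one-parameter model (a line through the origin fit by least squares), so the Hessian is a single number, and all function names and data are hypothetical. The estimate is a first-order approximation of how the loss on one new input would change if a given training point were removed, and the sketch includes a retraining check for comparison.

```python
# Toy illustration of an influence-function estimate (assumed setup, not
# the method used in Liang's research). Model: y ≈ theta * x.

def fit(xs, ys):
    """Closed-form least-squares slope for a line through the origin."""
    return sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)

def loss(theta, x, y):
    """Squared-error loss on a single point."""
    return 0.5 * (theta * x - y) ** 2

def grad(theta, x, y):
    """Derivative of the squared-error loss with respect to theta."""
    return (theta * x - y) * x

def removal_effects(xs, ys, x_test, y_test):
    """First-order estimate of the change in test loss if each training
    point were removed, computed without retraining the model."""
    n = len(xs)
    theta = fit(xs, ys)
    hessian = sum(x * x for x in xs) / n  # second derivative of mean training loss
    g_test = grad(theta, x_test, y_test)
    return [g_test * grad(theta, x, y) / (n * hessian) for x, y in zip(xs, ys)]

def actual_removal_effect(xs, ys, i, x_test, y_test):
    """Ground truth for comparison: retrain without point i and measure
    the change in test loss directly."""
    before = loss(fit(xs, ys), x_test, y_test)
    xs_rest, ys_rest = xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:]
    after = loss(fit(xs_rest, ys_rest), x_test, y_test)
    return after - before

# Hypothetical data: roughly y = 2x, with the last point a deliberate outlier.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 8.1, 2.0]

effects = removal_effects(xs, ys, x_test=3.0, y_test=6.0)
most_influential = max(range(len(xs)), key=lambda i: abs(effects[i]))
print("most influential training point:", most_influential)
print("estimated change if removed:", effects[most_influential])
print("actual change if removed:",
      actual_removal_effect(xs, ys, most_influential, 3.0, 6.0))
```

In this toy data the outlier is flagged as the most influential point, and both the estimate and the retraining check agree that removing it would lower the loss on the new input. With so few points the two numbers differ noticeably in size, because the estimate is only a linear approximation.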

Essentially, by understanding why a model makes the decisions it makes, Liang’s team hopes to improve how models function, discover new science, and provide end users with explanations of actions that impact them.

Another aspect of Liang’s research is ensuring that an AI understands, and is able to communicate, its limits to humans. The conventional metric for success, he explains, is average accuracy, “which is not a good interface for AI safety.” He asks, “What is one to do with an 80 percent reliable system?”

Liang is not looking for the system to have an accurate answer 100 percent of the time. Instead, he wants the system to be able to admit when it does not know an answer. If a user asks a system “How many painkillers should I take?” it is better for the system to say, “I don’t know” rather than making a costly or dangerous incorrect prediction.
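One simple way to picture a system that admits uncertainty is a confidence threshold: the system answers only when its top prediction is confident enough, and otherwise says it does not know. The Python sketch below is an assumption made for illustration, not a description of Liang's approach; the labels, probabilities, and the 0.9 threshold are all made up, and in practice a model's reported confidence may itself be unreliable.

```python
# Minimal sketch of abstention ("selective prediction"). The labels,
# probabilities, and threshold are hypothetical.

ABSTAIN = "I don't know"

def answer_or_abstain(probabilities, threshold=0.9):
    """Return the most probable answer, or abstain if confidence is too low."""
    answer, confidence = max(probabilities.items(), key=lambda item: item[1])
    return answer if confidence >= threshold else ABSTAIN

def coverage_and_accuracy(examples, threshold=0.9):
    """Fraction of questions answered, and accuracy on just those answers."""
    answered = correct = 0
    for probabilities, truth in examples:
        answer = answer_or_abstain(probabilities, threshold)
        if answer != ABSTAIN:
            answered += 1
            correct += answer == truth
    coverage = answered / len(examples)
    accuracy = correct / answered if answered else None
    return coverage, accuracy

# Each example pairs the model's (made-up) probabilities with the true answer.
examples = [
    ({"answer A": 0.97, "answer B": 0.03}, "answer A"),
    ({"answer A": 0.55, "answer B": 0.45}, "answer B"),
    ({"answer A": 0.08, "answer B": 0.92}, "answer B"),
    ({"answer A": 0.60, "answer B": 0.40}, "answer A"),
]

coverage, accuracy = coverage_and_accuracy(examples)
print("with abstention:", coverage, accuracy)
print("always answering:", coverage_and_accuracy(examples, threshold=0.0))
```

Reporting coverage alongside accuracy on the answered questions gives a user more to work with than a single average accuracy figure: it separates how often the system speaks from how often it is right when it does.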

Liang’s team is working on this challenge by tracking a model’s predictions through its learning algorithm — all the way back to the training data where the model parameters originated.

Liang’s team hopes that this approach, looking at the model through the lens of the training data, will become a standard part of the toolkit for developing, understanding, and diagnosing machine learning. He explains that researchers could relate this to many applications: medical, computer, natural language understanding systems, and various business analytics applications.

“I think,” Liang concludes, “there is some confusion about the role of simulations — some eschew it entirely and some are happy doing everything in simulation. Perhaps we need to change culturally to have a place for both.”

In this way, Liang and his team plan to lay a framework for a new generation of machine learning algorithms that work reliably, fail gracefully, and reduce risks.

This article is part of a Future of Life series on the AI safety research grants, which were funded by generous donations from Elon Musk and the Open Philanthropy Project.