Towards a Code of Ethics in Artificial Intelligence with Paula Boddington

AI promises a smarter world – a world where finance algorithms analyze data better than humans, self-driving cars save millions of lives from accidents, and medical robots eradicate disease. But machines aren’t perfect. Whether an automated trading agent buys the wrong stock, a self-driving car hits a pedestrian, or a medical robot misses a cancerous tumor – machines will make mistakes that severely impact human lives.

Paula Boddington, a philosopher based in the Department of Computer Science at Oxford, argues that AI’s power for good and bad makes it crucial that researchers consider the ethical importance of their work at every turn. To encourage this, she is taking steps to lay the groundwork for a code of AI research ethics.

Codes of ethics serve a role in any field that impacts human lives, such as medicine or engineering. Tech organizations like the Institute of Electrical and Electronics Engineers (IEEE) and the Association for Computing Machinery (ACM) also adhere to codes of ethics to keep technology beneficial, but no concrete ethical framework exists to guide all researchers involved in AI’s development. By codifying AI research ethics, Boddington suggests, researchers can more clearly frame AI’s development within society’s broader quest of improving human wellbeing.

To better understand AI ethics, Boddington has considered various areas including autonomous trading agents in finance, self-driving cars, and biomedical technology. In all three areas, machines are not only capable of causing serious harm, but they assume responsibilities once reserved for humans. As such, they raise fundamental ethical questions.

“Ethics is about how we relate to human beings, how we relate to the world, how we even understand what it is to live a human life or what our end goals of life are,” Boddington says. “AI is raising all of those questions. It’s almost impossible to say what AI ethics is about in general because there are so many applications. But one key issue is what happens when AI replaces or supplements human agency, a question which goes to the heart of our understandings of ethics.”

The Black Box Problem

Because AI systems will assume responsibility from humans – and for humans – it’s important that people understand how these systems might fail. However, this doesn’t always happen in practice.

Consider the Northpointe algorithm that US courts used to predict which defendants would reoffend. The algorithm weighed 100 factors such as prior arrests, family life, drug use, age and sex, and predicted the likelihood that a defendant would commit another crime. Northpointe’s developers did not specifically consider race, but when investigative journalists from ProPublica analyzed the algorithm, they found that it incorrectly labeled black defendants as “high risk” almost twice as often as white defendants. Unaware of this bias and eager to improve their criminal justice system, states like Wisconsin, Florida, and New York trusted the algorithm for years to determine sentences. Without understanding the tools they were using, these courts incarcerated defendants based on flawed calculations.
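
The disparity described here is a gap in false positive rates: among defendants who did not go on to reoffend, how often each group was labeled high risk. The following is a minimal sketch of that calculation in Python. The records, the group names "A" and "B", and the helper functions are invented for illustration; they are not Northpointe's or ProPublica's data or code.

```python
from collections import defaultdict

# Hypothetical records: (group, labeled_high_risk, reoffended).
# These values are made up purely to illustrate the calculation.
records = [
    ("A", True, False),
    ("A", True, False),
    ("A", False, False),
    ("A", False, False),
    ("A", True, True),
    ("B", True, False),
    ("B", False, False),
    ("B", False, False),
    ("B", False, False),
    ("B", True, True),
]


def false_positive_rate(rows):
    """Share of people who did not reoffend but were labeled high risk."""
    non_reoffenders = [row for row in rows if not row[2]]
    if not non_reoffenders:
        return None  # rate is undefined without any non-reoffenders
    flagged = sum(1 for row in non_reoffenders if row[1])
    return flagged / len(non_reoffenders)


def rates_by_group(rows):
    """Compute the false positive rate separately for each group."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[row[0]].append(row)
    return {group: false_positive_rate(rs) for group, rs in grouped.items()}


rates = rates_by_group(records)
for group, rate in sorted(rates.items()):
    print(f"group {group}: false positive rate = {rate:.2f}")
```

In this toy data, group A's false positive rate is twice group B's. A gap like this can appear even when group membership is not an input to the model, which is why an audit has to measure outcomes and cannot rely on inspecting the inputs alone.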

The Northpointe case offers a preview of the potential dangers of deploying AI systems that people don’t fully understand. Current machine-learning systems operate so quickly that no one really knows how they make decisions – not even the people who develop them. Moreover, these systems learn from their environment and update their behavior, making it more difficult for researchers to control and understand the decision-making process. This lack of transparency – the “black box” problem – makes it extremely difficult to construct and enforce a code of ethics.

Codes of ethics are effective in medicine and engineering because professionals understand and have control over their tools, Boddington suggests. There may be some blind spots – doctors don’t know everything about the medicine they prescribe – but we generally accept this “balance of risk.”

“It’s still assumed that there’s a reasonable level of control,” she explains. “In engineering buildings there’s no leeway to say, ‘Oh I didn’t know that was going to fall down.’ You’re just not allowed to get away with that. You have to be able to work it out mathematically. Codes of professional ethics rest on the basic idea that professionals have an adequate level of control over their goods and services.”

But AI makes this difficult. Because of the “black box” problem, if an AI system sets a dangerous criminal free or recommends the wrong treatment to a patient, researchers can legitimately argue that they couldn’t anticipate that mistake.

“If you can’t guarantee that you can control it, at least you could have as much transparency as possible in terms of telling people how much you know and how much you don’t know and what the risks are,” Boddington suggests. “Ethics concerns how we justify ourselves to others. So transparency is a key ethical virtue.”

Developing a Code of Ethics

Despite the “black box” problem, Boddington believes that scientific and medical communities can inform AI research ethics. She explains: “One thing that’s really helped in medicine and pharmaceuticals is having citizen and community groups keeping a really close eye on it. And in medicine there are quite a few ‘maverick’ or ‘outlier’ doctors who question, for instance, what the end value of medicine is. That’s one of the things you need to develop codes of ethics in a robust and responsible way.”

A code of AI research ethics will also require many perspectives. “I think what we really need is diversity in terms of thinking styles, personality styles, and political backgrounds, because the tech world and the academic world both tend to be fairly homogeneous,” Boddington explains.

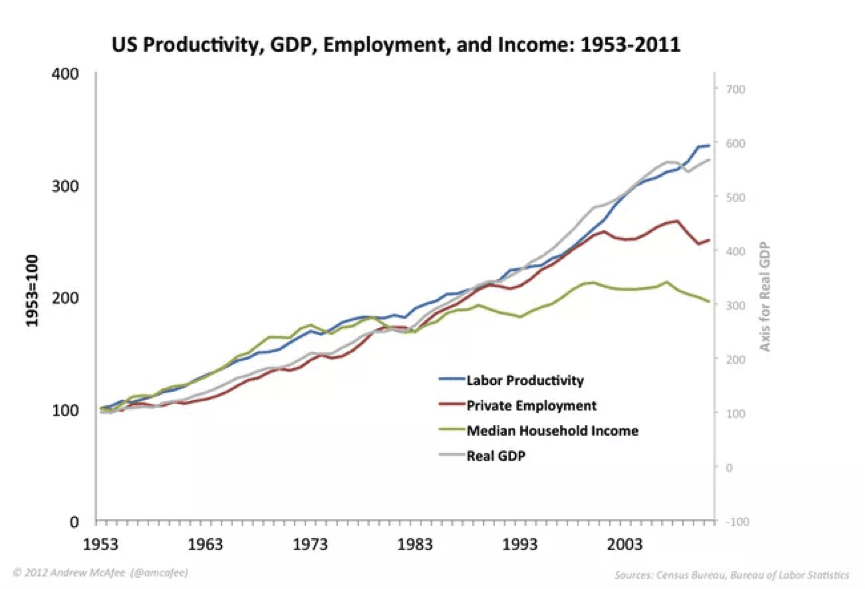

Not only will diverse perspectives account for different values, but they also might solve problems better, according to research from economist Lu Hong and political scientist Scott Page. Hong and Page found that if you compare two groups solving a problem – one homogeneous group of people with very high IQs, and one diverse group of people with lower IQs – the diverse group will probably solve the problem better.

Laying the Groundwork

This fall, Boddington will release the main output of her project: a book titled Towards a Code of Ethics for Artificial Intelligence. She readily admits that the book can’t cover every ethical dilemma in AI, but it should help demonstrate how tricky it is to develop codes of ethics for AI and spur more discussion on issues like how codes of professional ethics can deal with the “black box” problem.

Boddington has also collaborated with the IEEE Global Initiative for Ethical Considerations in Artificial Intelligence and Autonomous Systems, which recently released a report exhorting researchers to look beyond the technical capabilities of AI, and “prioritize the increase of human wellbeing as our metric for progress in the algorithmic age.”

Although a formal code is only part of what’s needed for the development of ethical AI, Boddington hopes that this discussion will eventually produce a code of AI research ethics. With a robust code, researchers will be better equipped to guide artificial intelligence in a beneficial direction.

This article is part of a Future of Life series on the AI safety research grants, which were funded by generous donations from Elon Musk and the Open Philanthropy Project.

Publications

- Boddington, Paula. EPSRC Principles of Robotics: Commentary on safety, robots as products, and responsibility. Ethical Principles of Robotics, special issue, 2016.

- Boddington, Paula. The Distinctiveness of AI Ethics, and Implications for Ethical Codes. Presented at IJCAI-16 Workshop 6 Ethics for Artificial Intelligence, New York, July 2016.

Workshops

- A day of ethical AI at Oxford: June 8, 2016. Oxford Martin School.

- The goal of the workshop was collaborative discussion among those working in AI, ethics, and related areas at geographically close and linked centres. Participants were invited from the Oxford Martin School, the Future of Humanity Institute, the Cambridge Centre for the Study of Existential Risk, and the Leverhulme Centre for the Future of Intelligence, plus others. Participants included FLI grantholders. The workshop drew participants from diverse disciplines, including computing, philosophy and psychology, to facilitate cross-disciplinary conversation and understanding.

- EPSRC Systems-Net Grand Challenge Workshop, “Ethics in Autonomous Systems”: November 25, 2015. Sheffield University.

- Paula Boddington attended and contributed to discussions.

- AISB workshop on Principles of Robotics: April 4, 2016. Sheffield University.

- Workshop examined the EPSRC (Engineering and Physical Sciences Research Council) Principles of Robotics. Boddington presented a paper, “Commentary on responsibility, product design and notions of safety”, and contributed to discussion.

- Outcome of workshop: Paper for Special Issue of Connection Science on Ethical Principles of Robotics, “EPSRC Principles of Robotics: Commentary on Safety, Robots as Products, and Responsibility” – Paula Boddington

- Ethics for Artificial Intelligence: July 9, 2016. New York.

- These researchers organized an IJCAI-16 workshop that focused on issues of the law and autonomous vehicles, the ethics of autonomous trading systems, and superintelligence.

Ongoing Projects

- IEEE Global Initiative for Ethical Considerations in the Design of Autonomous Systems:

- Paula Boddington has been working with this initiative. She also served on the Lethal Autonomous Weapons Systems (LAWS) sub-committee, and is on the Ecosystem Mapping Committee.

- These researchers were invited to guest-edit a Special Edition of Minds and Machines on issues of the law and autonomous vehicles, the ethics of autonomous trading systems, and superintelligence. It will be published in 2017.